On the Approximation of Phylogenetic Distance Functions by Artificial Neural Networks

Indiana University, Bloomington, IN 47405, USA

30-Second Read

IN SHORT: This paper addresses the core challenge of developing computationally efficient and scalable neural network architectures that can learn accurate phylogenetic distance functions from simulated data, bridging the gap between simple distance methods and complex model-based inference.

Key Innovations

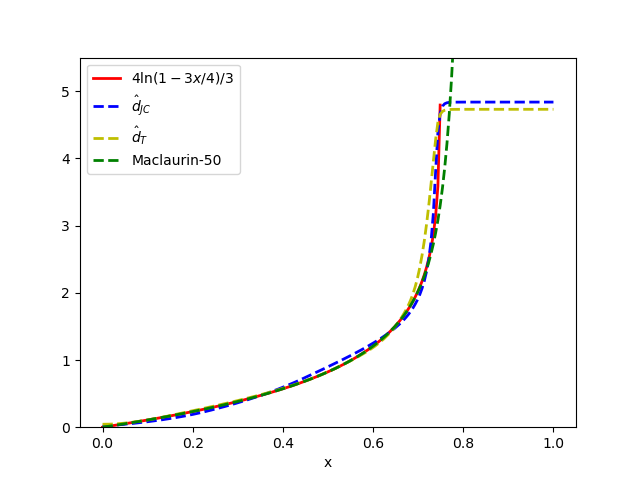

- Methodology: Introduces minimal, permutation-invariant neural architectures (Sequence networks S and Pair networks P) specifically designed to approximate phylogenetic distance functions, ensuring invariance to taxa ordering without costly data augmentation.

- Methodology: Leverages theoretical results from metric embedding (Bourgain's theorem, Johnson-Lindenstrauss Lemma) to inform network design, explicitly linking embedding dimension to the number of taxa for efficient representation.

- Methodology: Demonstrates how equivariant layers and attention mechanisms can be structured to handle both i.i.d. and spatially correlated sequence data (e.g., models with indels or rate variation), adapting to the complexity of the generative evolutionary model.
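To make the idea of invariance to taxa ordering concrete, here is a minimal NumPy sketch. It is not the paper's S or P network: the layer sizes, the random weights, the mean pooling over sites, and the Euclidean readout are all illustrative assumptions. It only shows that when the same weights are applied to every taxon, reordering the input taxa reorders the rows and columns of the distance matrix and changes nothing else.

```python
import numpy as np

def one_hot(seqs, alphabet="ACGT"):
    """Encode equal-length sequences as an array of shape (taxa, sites, 4)."""
    index = {ch: i for i, ch in enumerate(alphabet)}
    codes = np.array([[index[ch] for ch in s] for s in seqs])
    return np.eye(len(alphabet))[codes]

def embed(x, w1, w2):
    """Shared per-site layer, mean pooling over sites, then a linear map.

    The same weights are used for every taxon and every site, so a taxon's
    embedding does not depend on where it appears in the input.
    """
    h = np.maximum(x @ w1, 0.0)   # (taxa, sites, hidden), ReLU activation
    pooled = h.mean(axis=1)       # (taxa, hidden), invariant to site order
    return pooled @ w2            # (taxa, embed_dim)

def pairwise_distances(z):
    """Euclidean distance between every pair of taxon embeddings."""
    diff = z[:, None, :] - z[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

rng = np.random.default_rng(0)
w1 = rng.normal(size=(4, 8))  # hypothetical hidden width of 8
w2 = rng.normal(size=(8, 3))  # hypothetical embedding dimension of 3

# Toy alignment, invented for illustration only.
seqs = ["ACGTACGT", "ACGTACGA", "TCGTACGA", "TTGTACCA"]
x = one_hot(seqs)
d = pairwise_distances(embed(x, w1, w2))

# Reorder the taxa and recompute: the matrix is permuted the same way.
perm = np.array([2, 0, 3, 1])
d_perm = pairwise_distances(embed(x[perm], w1, w2))
print(np.allclose(d_perm, d[np.ix_(perm, perm)]))
```

Mean pooling over sites discards site order, which is only reasonable under i.i.d. site models; the summary above notes that models with indels or rate variation call for richer layers such as attention.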

Main Conclusions

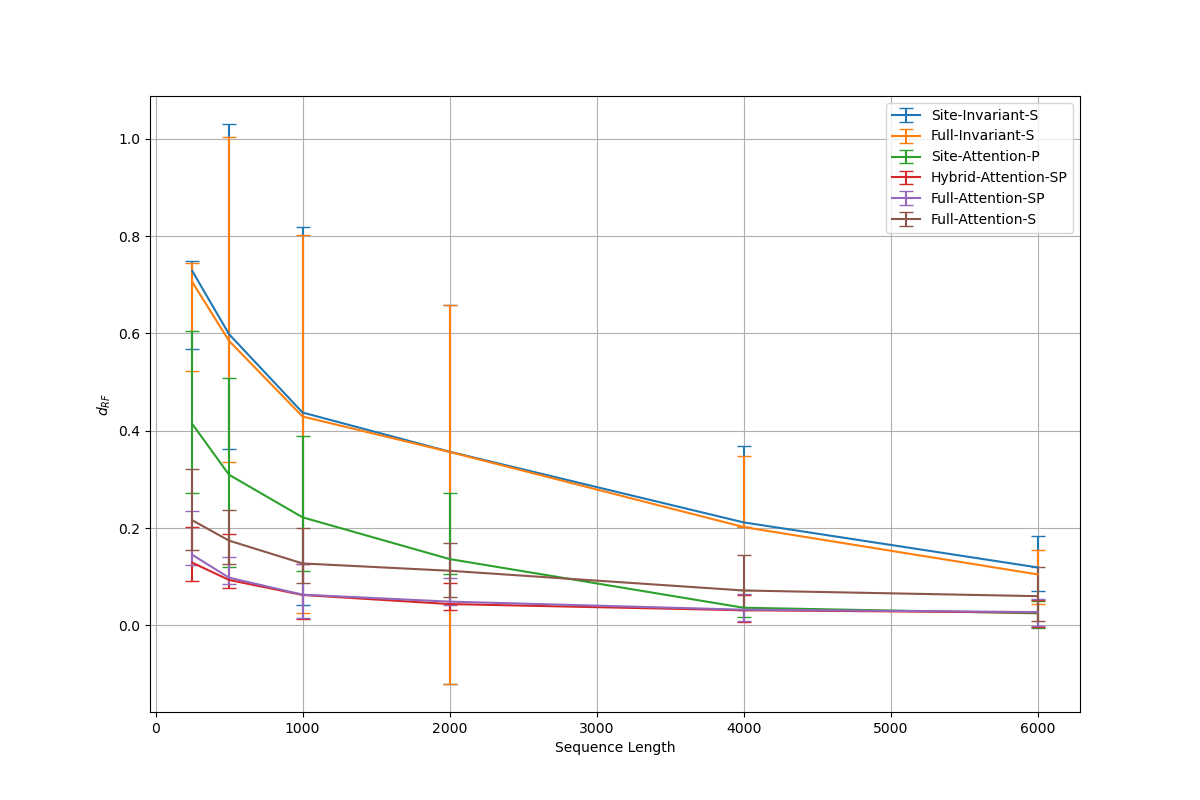

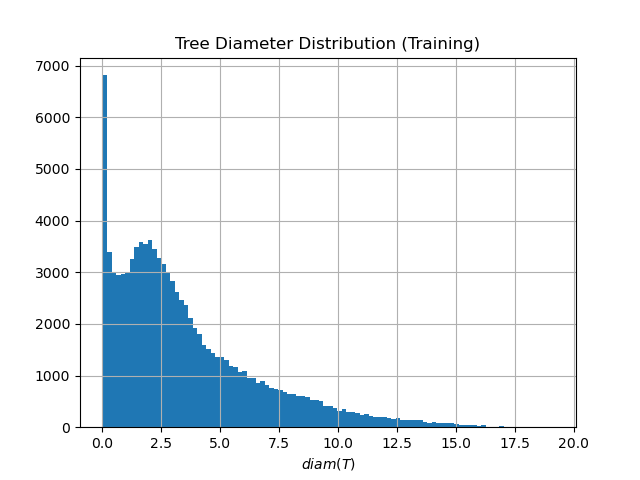

- The proposed minimal architectures (e.g., Sites-Invariant-S with ~7.6K parameters) achieve results comparable to state-of-the-art inference methods like IQ-TREE on simulated data under various models (JC, K2P, HKY, LG+indels), outperforming classic pairwise distance methods (d_H, d_JC, d_K2P) in most conditions.

- Architectures incorporating taxa-wise attention, while more memory-intensive, are necessary for complex evolutionary models with spatial dependencies; simpler networks suffice for simpler i.i.d. models, indicating a correspondence between network architecture and evolutionary model.

- Performance is highly sensitive to hyperparameters: validation error increases sharply with fewer than 4 attention heads or with hidden channel counts outside an optimal range (e.g., 32-128), aligning with theoretical requirements for learning graph-structured data.
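For reference, the classic pairwise distances used as baselines above (d_H, d_JC, d_K2P) can be sketched in a few lines of Python using the standard textbook formulas. Two assumptions are made here: d_H is taken to be the per-site proportion of mismatches, and the inputs are aligned, gap-free DNA sequences over A, C, G, T. The function names are illustrative and do not come from the paper.

```python
import math

PURINES = {"A", "G"}  # C and T are pyrimidines

def p_distance(a, b):
    """Normalized Hamming distance: fraction of sites that differ."""
    if len(a) != len(b) or not a:
        raise ValueError("sequences must be non-empty and of equal length")
    return sum(x != y for x, y in zip(a, b)) / len(a)

def jc_distance(a, b):
    """Jukes-Cantor corrected distance: -3/4 * ln(1 - 4p/3)."""
    p = p_distance(a, b)
    if p >= 0.75:
        return math.inf  # correction is undefined at saturation
    return -0.75 * math.log(1.0 - 4.0 * p / 3.0)

def k2p_distance(a, b):
    """Kimura two-parameter distance from transition and transversion rates."""
    if len(a) != len(b) or not a:
        raise ValueError("sequences must be non-empty and of equal length")
    transitions = transversions = 0
    for x, y in zip(a, b):
        if x == y:
            continue
        # A transition stays within purines or within pyrimidines.
        if (x in PURINES) == (y in PURINES):
            transitions += 1
        else:
            transversions += 1
    P = transitions / len(a)
    Q = transversions / len(a)
    arg1, arg2 = 1.0 - 2.0 * P - Q, 1.0 - 2.0 * Q
    if arg1 <= 0.0 or arg2 <= 0.0:
        return math.inf  # correction is undefined at saturation
    return -0.5 * math.log(arg1) - 0.25 * math.log(arg2)

# Toy pair differing at one of four sites (a T/A transversion).
print(p_distance("ACGT", "ACGA"))
print(jc_distance("ACGT", "ACGA"))
print(k2p_distance("ACGT", "ACGA"))
```

Both corrections exceed the raw mismatch proportion because they account for multiple substitutions at the same site. These are fixed closed-form functions of a sequence pair, whereas the networks described here learn the distance function from simulated data.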

Abstract: Inferring the phylogenetic relationships among a sample of organisms is a fundamental problem in modern biology. While distance-based hierarchical clustering algorithms achieved early success on this task, these have been supplanted by Bayesian and maximum likelihood search procedures based on complex models of molecular evolution. In this work we describe minimal neural network architectures that can approximate classic phylogenetic distance functions and the properties required to learn distances under a variety of molecular evolutionary models. In contrast to model-based inference (and recently proposed model-free convolutional and transformer networks), these architectures have a small computational footprint and are scalable to large numbers of taxa and molecular characters. The learned distance functions generalize well and, given an appropriate training dataset, achieve results comparable to state-of-the-art inference methods.