Paper List

-

Developing the PsyCogMetrics™ AI Lab to Evaluate Large Language Models and Advance Cognitive Science

This paper addresses the critical gap between sophisticated LLM evaluation needs and the lack of accessible, scientifically rigorous platforms that in...

-

Equivalence of approximation by networks of single- and multi-spike neurons

This paper resolves the fundamental question of whether single-spike spiking neural networks (SNNs) are inherently less expressive than multi-spike SN...

-

The neuroscience of transformers

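Proposes a novel computational mapping between the Transformer architecture and cortical column microcircuits, bridging modern AI and neuroscience.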

-

Framing local structural identifiability and observability in terms of parameter-state symmetries

This paper addresses the core challenge of systematically determining which parameters and states in a mechanistic ODE model can be uniquely inferred ...

-

Leveraging Phytolith Research using Artificial Intelligence

This paper addresses the critical bottleneck in phytolith research by automating the labor-intensive manual microscopy process through a multimodal AI...

-

Neural network-based encoding in free-viewing fMRI with gaze-aware models

This paper addresses the core challenge of building computationally efficient and ecologically valid brain encoding models for naturalistic vision by ...

-

Scalable DNA Ternary Full Adder Enabled by a Competitive Blocking Circuit

This paper addresses the core bottleneck of carry information attenuation and limited computational scale in DNA binary adders by introducing a scalab...

-

ELISA: An Interpretable Hybrid Generative AI Agent for Expression-Grounded Discovery in Single-Cell Genomics

This paper addresses the critical bottleneck of translating high-dimensional single-cell transcriptomic data into interpretable biological hypotheses ...

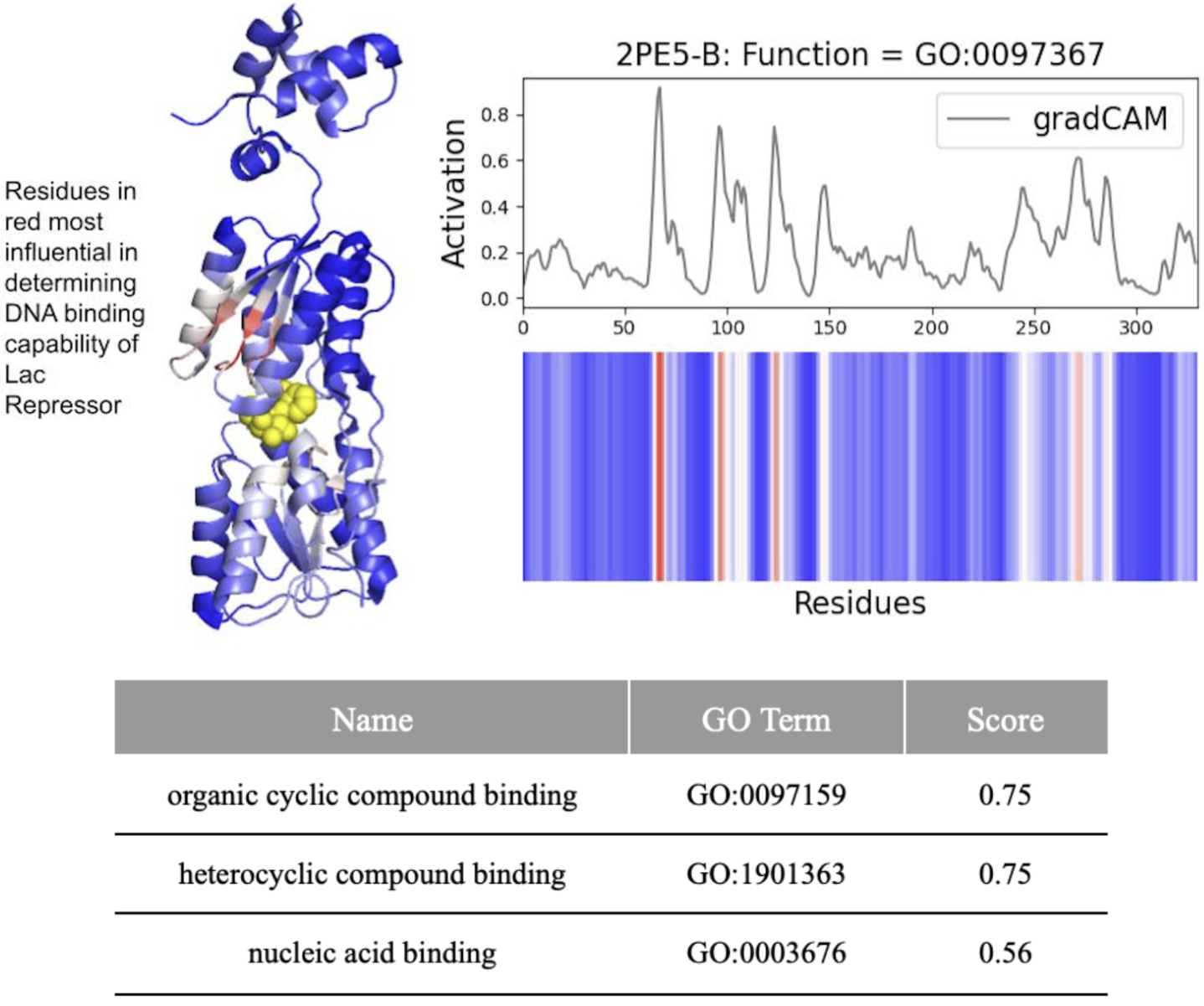

DeepFRI Demystified: Interpretability vs. Accuracy in AI Protein Function Prediction

Yale University | Microsoft

30-Second Read

IN SHORT: This study addresses the critical gap between high predictive accuracy and biological interpretability in DeepFRI, revealing that the model often prioritizes structural motifs over functional residues, complicating reliable identification of drug targets.

Key Innovations

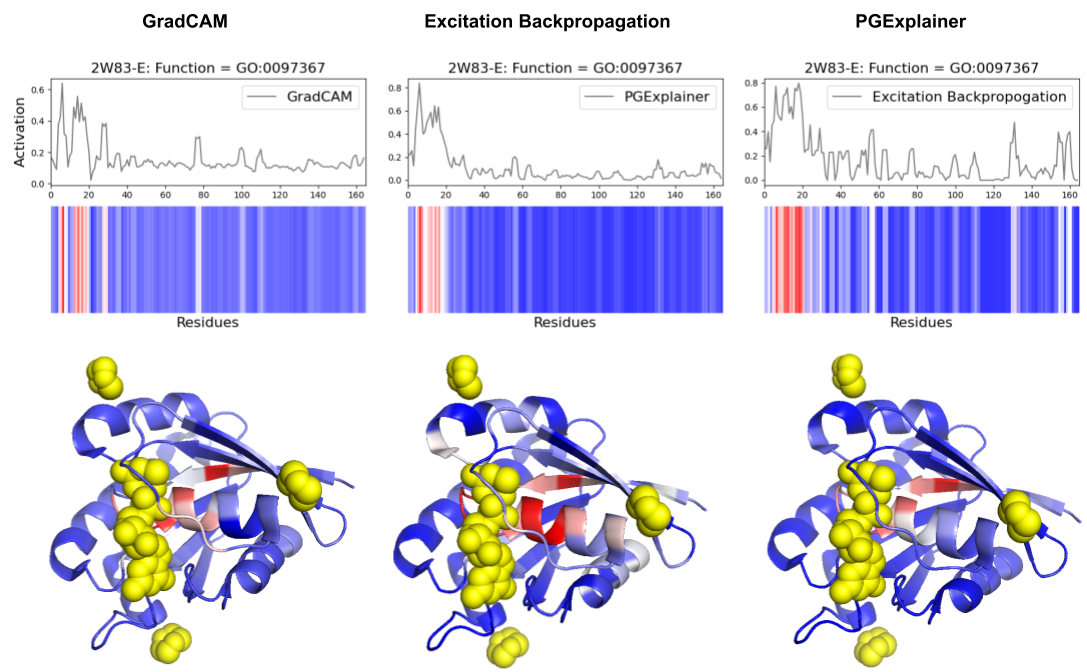

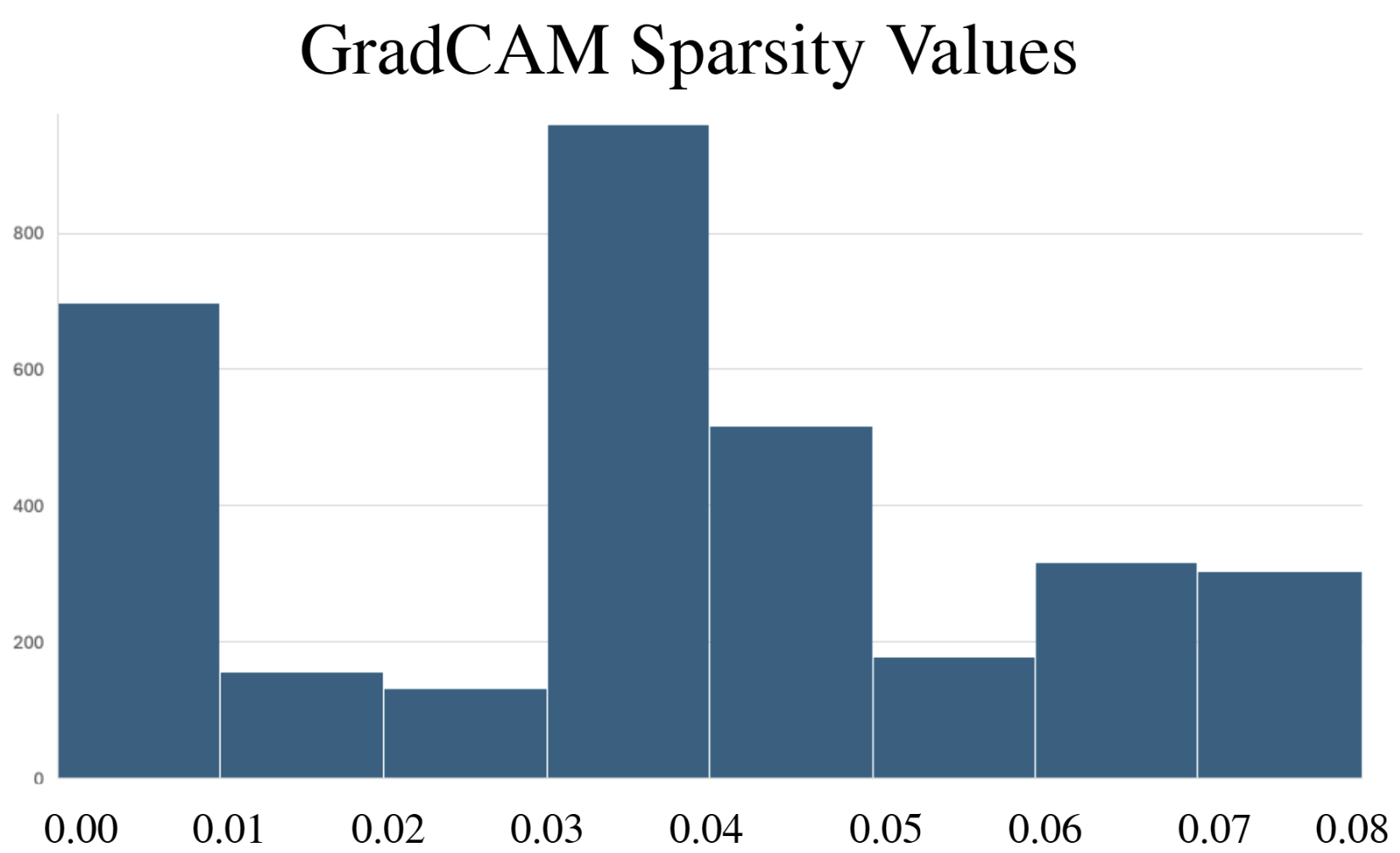

- Methodology: Comprehensive benchmarking of three post-hoc explainability methods (GradCAM, Excitation Backpropagation, PGExplainer) on DeepFRI with quantitative sparsity analysis.

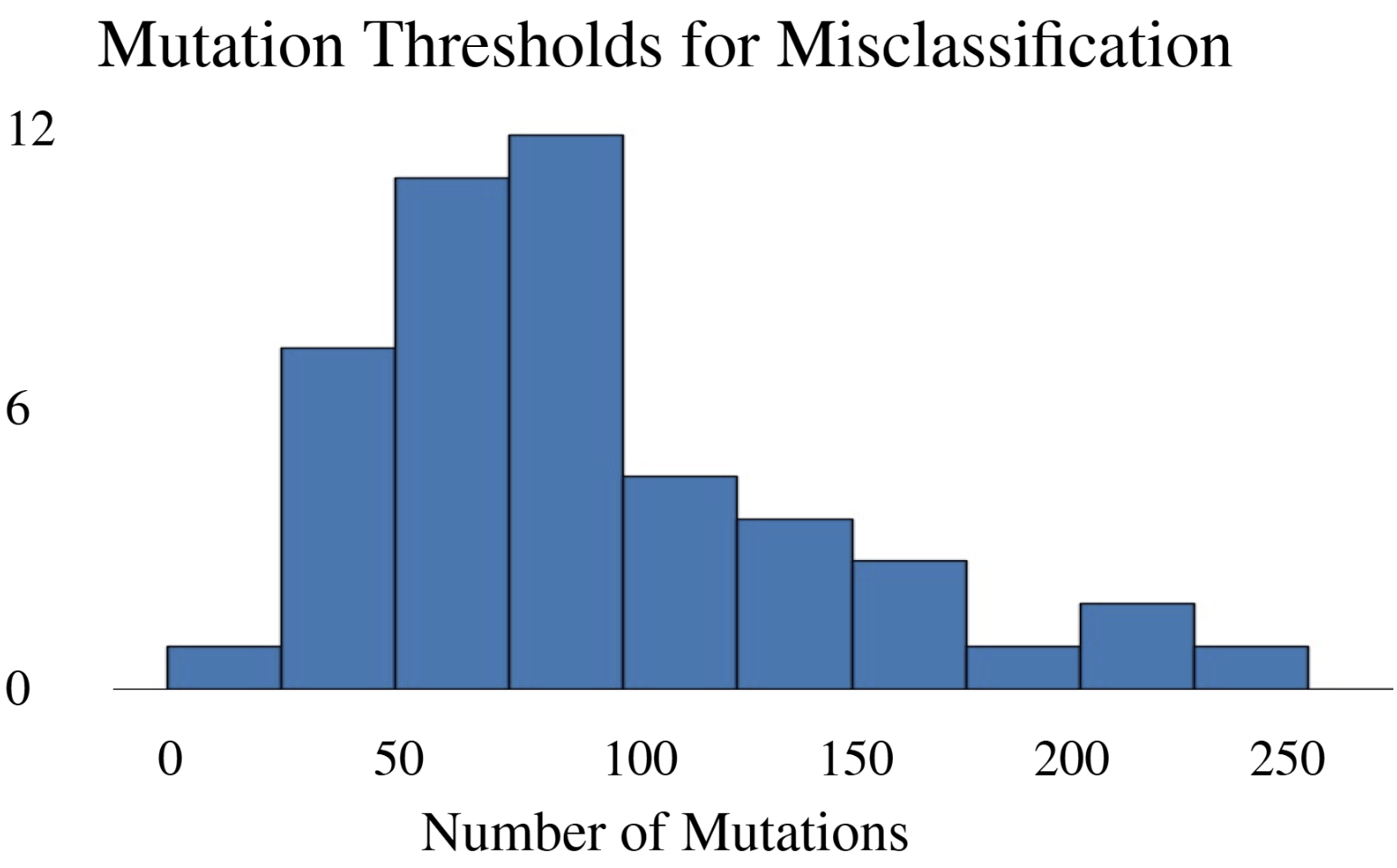

- Methodology: Development of a modified DeepFool adversarial testing framework for protein sequences, measuring mutation thresholds required for misclassification.

- Biology: Revealed that DeepFRI prioritizes amino acids controlling protein structure over function in more than 50% of tested proteins, highlighting a fundamental accuracy-interpretability trade-off.
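The idea of a "mutation threshold" can be illustrated with a small sketch: mutate residues one at a time and count how many substitutions it takes before the predicted label changes. This is not the study's modified DeepFool framework. DeepFool-style methods typically use gradient information to choose perturbations, whereas this sketch simply walks the sequence left to right. `toy_classifier`, `mutations_to_flip`, and `mutation_fraction` are hypothetical names, and the toy classifier is an assumed stand-in for DeepFRI, which is not called here.

```python
from typing import Callable, Optional

# Assumption: a crude hydrophobic residue set used only by the toy classifier.
HYDROPHOBIC = set("AVILMFWY")


def toy_classifier(seq: str) -> int:
    """Hypothetical stand-in for a function predictor (NOT DeepFRI).

    Returns label 1 if at least half of the residues are hydrophobic, else 0.
    """
    hydrophobic_count = sum(res in HYDROPHOBIC for res in seq)
    return int(2 * hydrophobic_count >= len(seq))


def mutations_to_flip(seq: str, classify: Callable[[str], int]) -> Optional[int]:
    """Count substitutions needed before the predicted label changes.

    Positions are mutated left to right; each residue becomes 'G'
    (or 'A' if it is already 'G'). Returns None if the label never flips.
    """
    original_label = classify(seq)
    residues = list(seq)
    for count, pos in enumerate(range(len(residues)), start=1):
        residues[pos] = "A" if residues[pos] == "G" else "G"
        if classify("".join(residues)) != original_label:
            return count
    return None


def mutation_fraction(n_mutations: int, length: int) -> float:
    """Fraction of the sequence that had to be mutated."""
    return n_mutations / length


if __name__ == "__main__":
    # Toy sequence, not a real protein.
    print(mutations_to_flip("AVILMFWYAV", toy_classifier))
    # Arithmetic check of the reported lac repressor figure: 206 of 330 residues.
    print(round(100 * mutation_fraction(206, 330), 1))  # 62.4
```

A high threshold under a scheme like this suggests the classifier is insensitive to individual substitutions, which is the sense in which robustness and sensitivity to subtle functional changes pull against each other.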

Main Conclusions

- DeepFRI required 206 mutations (62.4% of 330 residues) in the lac repressor for misclassification, demonstrating extreme robustness but potentially missing subtle functional alterations.

- Explainability methods showed significant granularity differences: PGExplainer was 3× sparser than GradCAM and 17× sparser than Excitation Backpropagation across 124 binding proteins.

- All three methods converged on biochemically critical P-loop residues (0-20) in ARF6 GTPase, validating DeepFRI's focus on conserved functional motifs in straightforward domains.
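One way to make a sparsity comparison like "3× sparser" concrete is to count the fraction of residues whose normalized relevance score clears a threshold, then take the ratio between two methods. The sketch below is an illustration only. The threshold of 0.5, the function names, and the toy score vectors are assumptions, not the study's actual metric or data.

```python
from typing import List, Sequence


def normalize(scores: Sequence[float]) -> List[float]:
    """Min-max scale per-residue relevance scores to [0, 1]."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [0.0 for _ in scores]
    return [(s - lo) / (hi - lo) for s in scores]


def salient_fraction(scores: Sequence[float], threshold: float = 0.5) -> float:
    """Fraction of residues whose normalized score is at or above the threshold."""
    norm = normalize(scores)
    return sum(1 for s in norm if s >= threshold) / len(norm)


def sparsity_ratio(
    dense_scores: Sequence[float],
    sparse_scores: Sequence[float],
    threshold: float = 0.5,
) -> float:
    """How many times fewer residues the sparser method highlights."""
    sparse = salient_fraction(sparse_scores, threshold)
    if sparse == 0.0:
        raise ValueError("sparser method highlights no residues at this threshold")
    return salient_fraction(dense_scores, threshold) / sparse


if __name__ == "__main__":
    # Toy relevance maps over 12 residues (made-up numbers, not model output).
    broad = [0.9, 0.8, 0.7, 0.6, 0.9, 0.8, 0.1, 0.0, 0.1, 0.0, 0.2, 0.1]
    focused = [1.0, 0.9, 0.0, 0.1, 0.0, 0.0, 0.1, 0.0, 0.0, 0.1, 0.0, 0.0]
    print(salient_fraction(broad))    # 6 of 12 residues highlighted
    print(salient_fraction(focused))  # 2 of 12 residues highlighted
    print(sparsity_ratio(broad, focused))
```

With these toy inputs the broad map highlights three times as many residues as the focused one. The result depends on the chosen threshold, so any real comparison would need to report it.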

Abstract: Machine learning technologies for protein function prediction are black box models. Despite their potential to identify key drug targets with high accuracy and accelerate therapy development, the adoption of these methods depends on verifying their findings. This study evaluates DeepFRI, a leading Graph Convolutional Network (GCN)-based tool, using advanced explainability techniques (GradCAM, Excitation Backpropagation, and PGExplainer) and adversarial robustness tests. Our findings reveal that the model’s predictions often prioritize conserved motifs over truly deterministic residues, complicating the identification of functional sites. Quantitative analyses show that explainability methods differ significantly in granularity, with GradCAM providing broad relevance and PGExplainer pinpointing specific active sites. These results highlight trade-offs between accuracy and interpretability, suggesting areas for improvement in DeepFRI’s architecture to enhance its trustworthiness in drug discovery and regulatory settings.