PanFoMa: A Lightweight Foundation Model and Benchmark for Pan-Cancer


30-Second Read

IN SHORT: This paper addresses the dual challenge of achieving computational efficiency without sacrificing accuracy in whole-transcriptome single-cell representation learning for pan-cancer analysis, moving beyond the limitations of pure Transformer or Mamba architectures.

Core Innovations

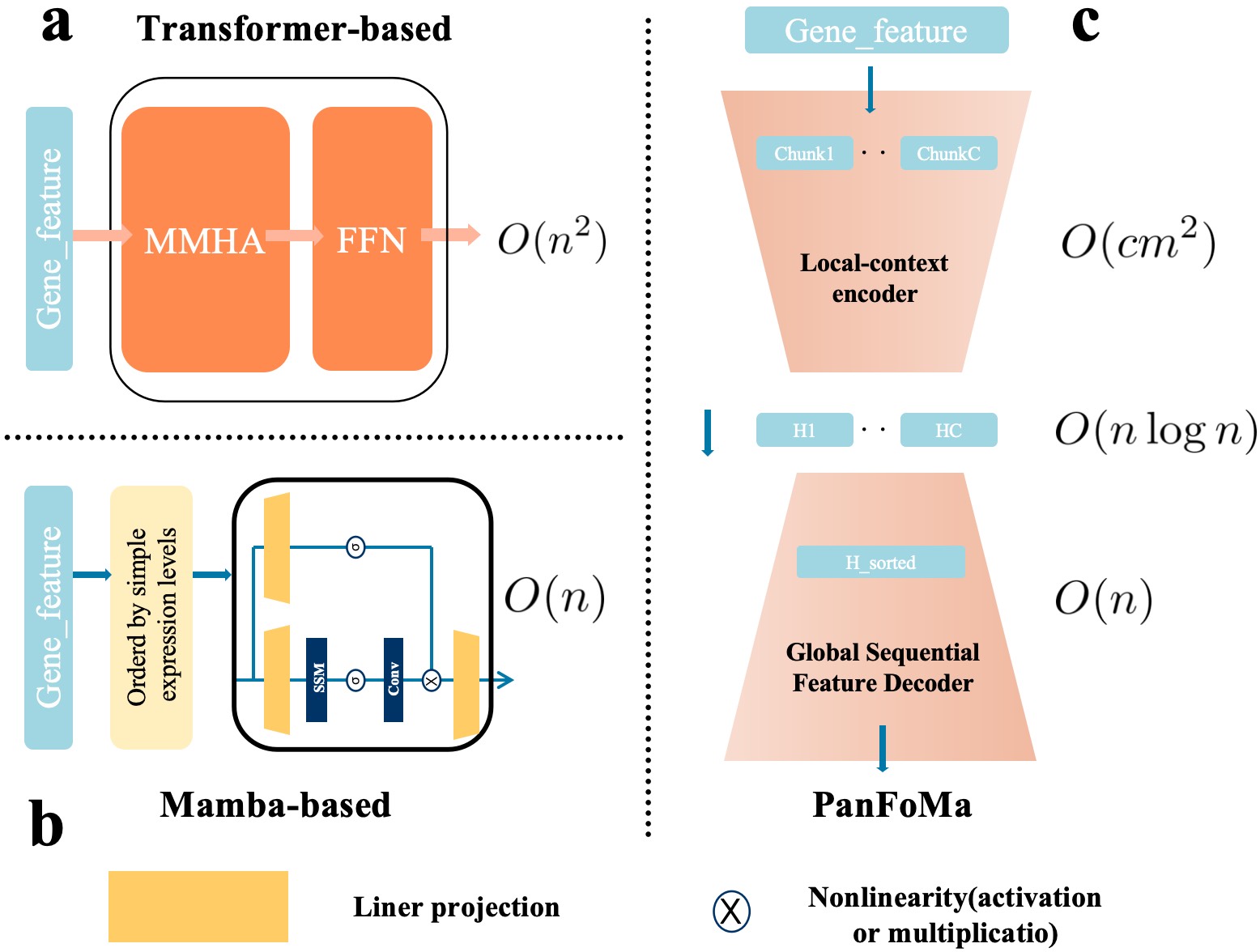

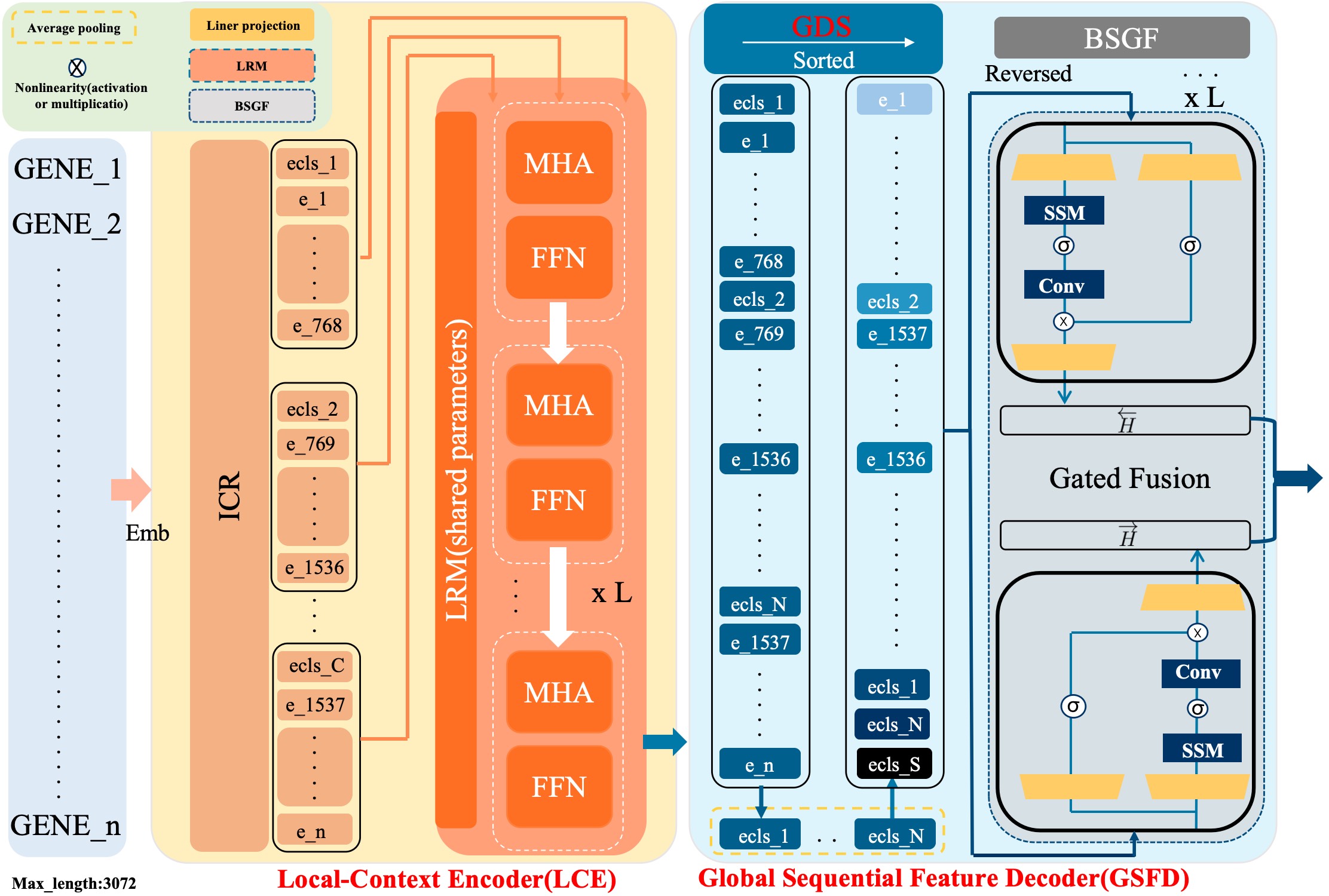

- Methodology: Proposes a novel hybrid architecture (PanFoMa) that decouples local gene interaction modeling (via a lightweight, chunked Transformer encoder) from global context integration (via a bidirectional Mamba decoder), achieving O(C·M² + N log N) complexity.

- Methodology: Introduces a Global-informed Dynamic Sorting (GDS) mechanism that adaptively orders genes for the Mamba decoder based on a learned global cell state vector, moving beyond static, heuristic gene ordering (e.g., by mean expression).

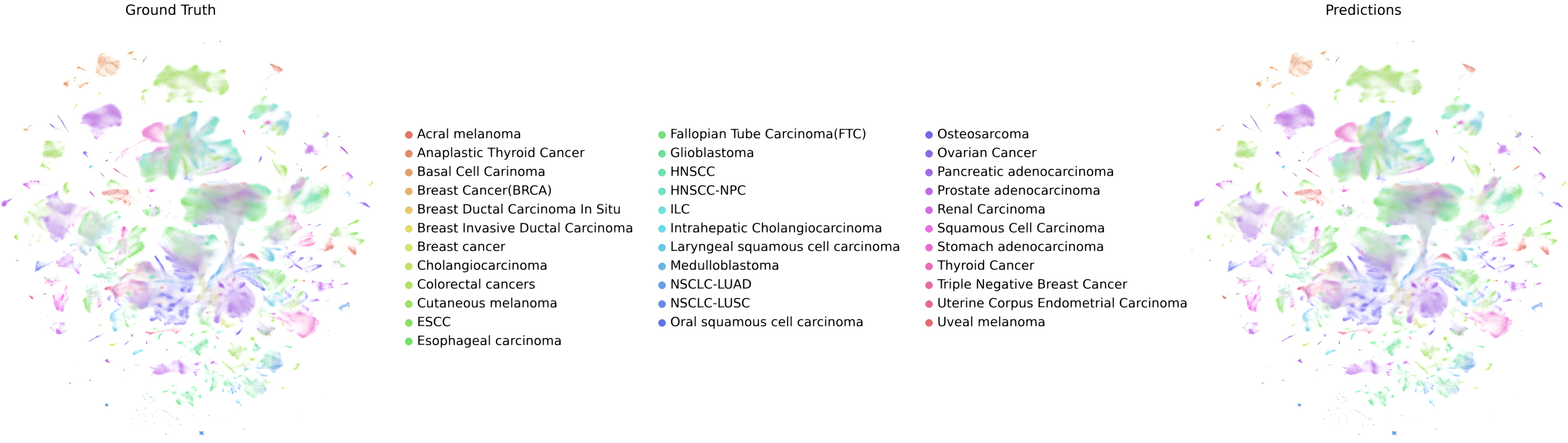

- Biology: Constructs and releases PanFoMaBench, a large-scale, rigorously curated pan-cancer single-cell benchmark comprising over 3.5 million high-quality cells across 33 cancer subtypes from 23 tissues, addressing the lack of comprehensive evaluation resources.
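The dynamic sorting idea in the second bullet can be illustrated with a minimal NumPy sketch. Everything here is an assumption made for illustration: the global cell state is taken as a mean over gene embeddings, the scoring is a single bilinear form, and the names `dynamic_sort` and `w_score` are hypothetical. The summary does not describe how PanFoMa actually computes its scores.

```python
import numpy as np

def dynamic_sort(gene_emb, w_score):
    """Order genes by a score that depends on a global cell state.

    gene_emb: (N, D) per-gene embeddings for one cell (assumed input).
    w_score:  (D, D) projection, standing in for learned weights (hypothetical).
    Returns the gene order and the per-gene scores.
    """
    # Assumed pooling: the global cell state is the mean gene embedding.
    global_state = gene_emb.mean(axis=0)       # shape (D,)
    query = w_score @ global_state             # shape (D,)
    scores = gene_emb @ query                  # shape (N,), one score per gene
    # Highest-scoring genes come first; a stable sort keeps ties deterministic.
    order = np.argsort(-scores, kind="stable")
    return order, scores

rng = np.random.default_rng(0)
emb = rng.normal(size=(8, 4))   # 8 genes, 4-dimensional embeddings
w = rng.normal(size=(4, 4))
order, scores = dynamic_sort(emb, w)
sorted_emb = emb[order]         # sequence that would be fed to the decoder
```

Because the scores depend on the pooled cell state, two cells with different expression profiles can yield different gene orders, in contrast to a fixed ordering such as mean expression.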

Main Conclusions

- PanFoMa achieves state-of-the-art pan-cancer classification on PanFoMaBench, with 94.74% accuracy (ACC) and 92.5% Macro-F1, outperforming GeneFormer by 3.5% ACC and 4.0% F1.

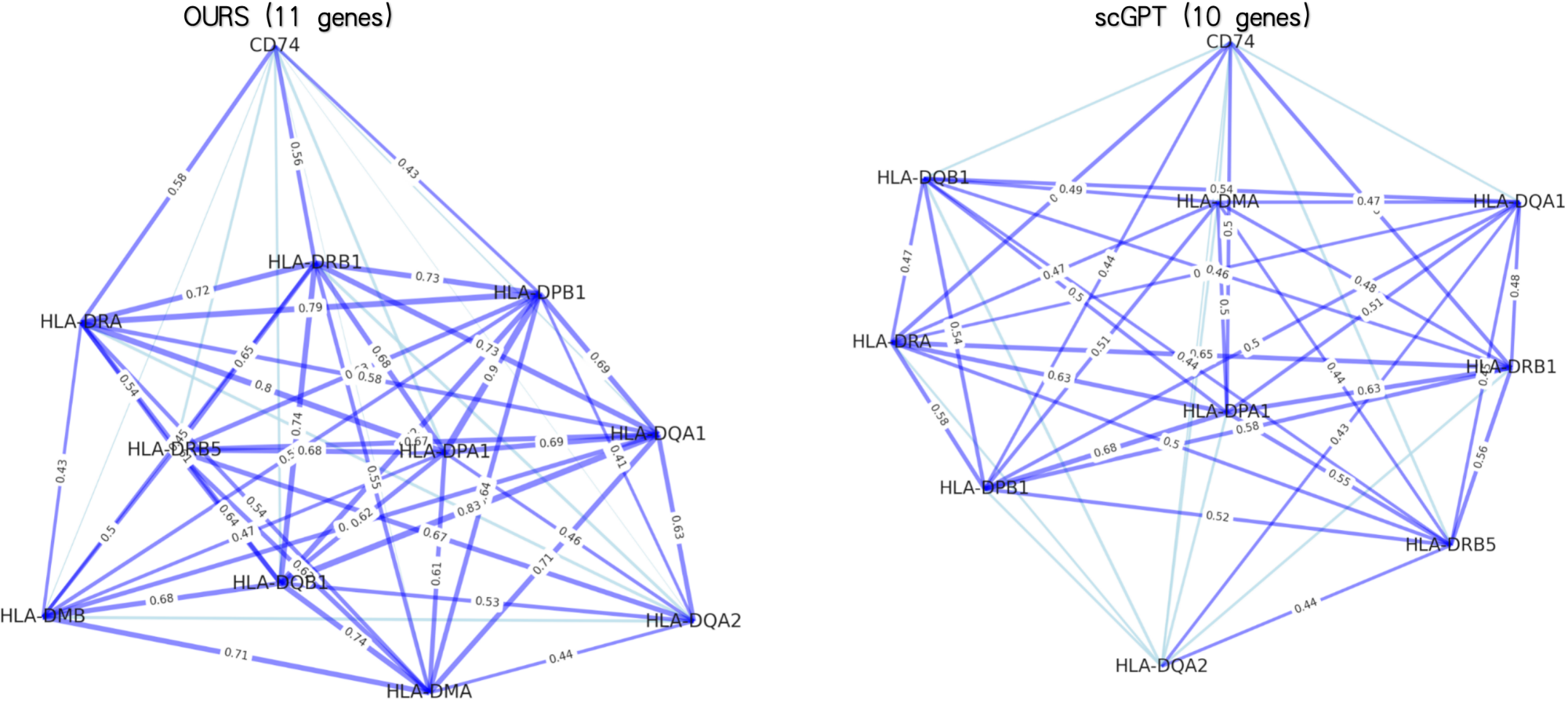

- The model demonstrates superior generalizability across foundational tasks, showing improvements of +7.4% in cell type annotation, +4.0% in batch integration, and +3.1% in multi-omics integration over baselines.

- The hybrid local-global design and dynamic sorting are validated as effective, enabling efficient processing of full transcriptome-scale data (~3000 genes) while capturing both fine-grained local interactions and broad global regulatory patterns.
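To make the efficiency claim concrete, the sketch below compares rough operation counts for the stated O(C·M² + N log N) complexity against full self-attention at O(N²). It assumes C is the number of chunks, M the genes per chunk, and N = C·M the total gene count; the summary does not define these symbols, and the chunk size of 100 is a hypothetical value. Constant factors and the embedding dimension are ignored.

```python
import math

def hybrid_cost(n_genes, chunk_size):
    """Rough count for chunked attention plus an N log N term (assumed reading)."""
    n_chunks = math.ceil(n_genes / chunk_size)
    local = n_chunks * chunk_size ** 2          # C * M^2: attention inside each chunk
    global_part = n_genes * math.log2(n_genes)  # N log N: e.g. sorting the genes
    return local + global_part

def full_attention_cost(n_genes):
    """Rough count for attention over all gene pairs."""
    return n_genes ** 2

n = 3000  # gene count quoted in the summary
ratio = full_attention_cost(n) / hybrid_cost(n, chunk_size=100)
print(f"full attention / hybrid cost ratio: {ratio:.1f}")
```

Under these assumptions the local term dominates the hybrid cost, so smaller chunks reduce computation at the price of a narrower attention window per gene.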

Abstract: Single-cell RNA sequencing (scRNA-seq) is essential for decoding tumor heterogeneity. However, pan-cancer research still faces two key challenges: learning discriminative and efficient single-cell representations, and establishing a comprehensive evaluation benchmark. In this paper, we introduce PanFoMa, a lightweight hybrid neural network that combines the strengths of Transformers and state-space models to achieve a balance between performance and efficiency. PanFoMa consists of a front-end local-context encoder with shared self-attention layers to capture complex, order-independent gene interactions, and a back-end global sequential feature decoder that efficiently integrates global context using a linear-time state-space model. This modular design preserves the expressive power of Transformers while leveraging the scalability of Mamba to enable transcriptome modeling, effectively capturing both local and global regulatory signals. To enable robust evaluation, we also construct a large-scale pan-cancer single-cell benchmark, PanFoMaBench, containing over 3.5 million high-quality cells across 33 cancer subtypes, curated through a rigorous preprocessing pipeline. Experimental results show that PanFoMa outperforms state-of-the-art models on our pan-cancer benchmark (+4.0%) and across multiple public tasks, including cell type annotation (+7.4%), batch integration (+4.0%) and multi-omics integration (+3.1%). The code is available at https://github.com/Xiaoshui-Huang/PanFoMa.
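The two-stage design described in the abstract can be sketched in NumPy as follows. This is an illustrative toy, not the released implementation: the function names are hypothetical, the attention is single-head with no residuals or normalization, and the global stage uses a fixed-decay linear recurrence as a stand-in for Mamba's selective state-space layer.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def chunked_self_attention(x, wq, wk, wv, chunk_size):
    """Self-attention applied within each chunk, with weights shared across chunks."""
    n, d = x.shape
    out = np.empty_like(x)
    for start in range(0, n, chunk_size):
        block = x[start:start + chunk_size]
        q, k, v = block @ wq, block @ wk, block @ wv
        attn = softmax(q @ k.T / np.sqrt(d))   # (M, M) weights inside the chunk
        out[start:start + chunk_size] = attn @ v
    return out

def linear_scan(x, decay):
    """Linear-time recurrence h_t = decay * h_{t-1} + (1 - decay) * x_t."""
    h = np.zeros(x.shape[1])
    out = np.empty_like(x)
    for t in range(x.shape[0]):
        h = decay * h + (1.0 - decay) * x[t]
        out[t] = h
    return out

def bidirectional_scan(x, decay=0.9):
    """Sum of a forward and a backward scan, so every position sees both sides."""
    fwd = linear_scan(x, decay)
    bwd = linear_scan(x[::-1], decay)[::-1]
    return fwd + bwd

rng = np.random.default_rng(0)
x = rng.normal(size=(12, 4))                   # 12 genes, 4-dim embeddings
wq, wk, wv = (rng.normal(size=(4, 4)) for _ in range(3))
local = chunked_self_attention(x, wq, wk, wv, chunk_size=4)
features = bidirectional_scan(local)           # (12, 4) globally mixed features
```

The local stage never mixes information across chunk boundaries; cross-chunk context enters only through the scan, whose cost grows linearly with the number of genes.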