On the Approximation of Phylogenetic Distance Functions by Artificial Neural Networks

Indiana University, Bloomington, IN 47405, USA

30-Second Read

IN SHORT: This paper addresses the core challenge of developing computationally efficient and scalable neural network architectures that can learn accurate phylogenetic distance functions from simulated data, bridging the gap between simple distance methods and complex model-based inference.

Core Innovations

- Methodology: Introduces minimal, permutation-invariant neural architectures (Sequence networks S and Pair networks P) specifically designed to approximate phylogenetic distance functions, ensuring invariance to taxa ordering without costly data augmentation.

- Methodology: Leverages theoretical results from metric embedding (Bourgain's theorem, Johnson-Lindenstrauss Lemma) to inform network design, explicitly linking embedding dimension to the number of taxa for efficient representation.

- Methodology: Demonstrates how equivariant layers and attention mechanisms can be structured to handle both i.i.d. and spatially correlated sequence data (e.g., models with indels or rate variation), adapting to the complexity of the generative evolutionary model.
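To make the invariance property in the first point concrete, here is a minimal sketch of a pairwise scoring function that is symmetric in its two inputs and unaffected by the order of alignment sites, so the resulting matrix simply permutes when the taxa are reordered. It is not the paper's S or P network: the weights are random and untrained, and the names `init_weights`, `pair_score`, and `score_matrix` are hypothetical, chosen only for illustration. The output is called a score because nothing here guarantees it behaves like a distance.

```python
import random

ALPHABET = "ACGT"  # assumption: gap-free nucleotide sequences


def one_hot(base):
    """Encode one nucleotide as a 4-dimensional indicator vector."""
    return [1.0 if base == b else 0.0 for b in ALPHABET]


def init_weights(n_hidden=8, seed=0):
    """Random, untrained weights standing in for learned parameters."""
    rng = random.Random(seed)
    W = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(n_hidden)]
    v = [rng.gauss(0.0, 1.0) for _ in range(n_hidden)]
    return W, v


def pair_score(seq_a, seq_b, W, v):
    """Symmetric, site-order-invariant score for two aligned sequences."""
    pooled = [0.0] * len(W)
    for a, b in zip(seq_a, seq_b):
        xa, xb = one_hot(a), one_hot(b)
        # Symmetric site features: swapping a and b leaves them unchanged.
        feat = [p + q for p, q in zip(xa, xb)]
        feat += [abs(p - q) for p, q in zip(xa, xb)]
        for h, row in enumerate(W):
            pre = sum(w * f for w, f in zip(row, feat))
            pooled[h] += max(0.0, pre)  # ReLU, then sum-pool over sites
    n_sites = len(seq_a)
    # Linear readout of the site-averaged hidden features.
    return sum(vh * ph / n_sites for vh, ph in zip(v, pooled))


def score_matrix(seqs, W, v):
    """All-pairs scores; reordering seqs permutes rows and columns."""
    n = len(seqs)
    return [[pair_score(seqs[i], seqs[j], W, v) for j in range(n)]
            for i in range(n)]
```

Because each site is processed independently and then pooled by a sum, no data augmentation over site or taxa orderings is needed to obtain these symmetries; they hold by construction. Handling spatially correlated sites, as the third point describes, would require layers that look across sites, which this sketch deliberately omits.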

Main Conclusions

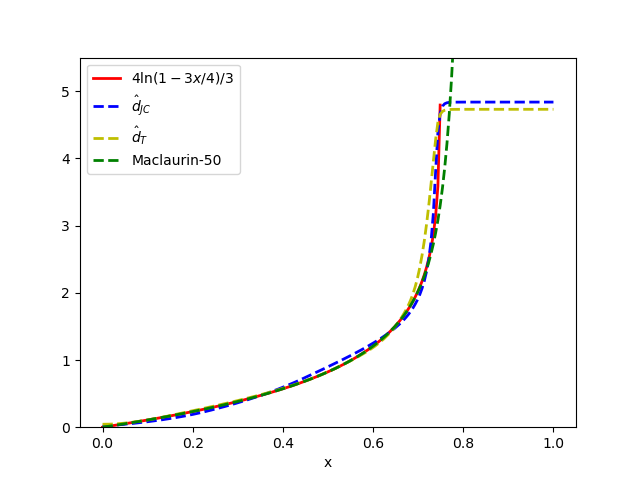

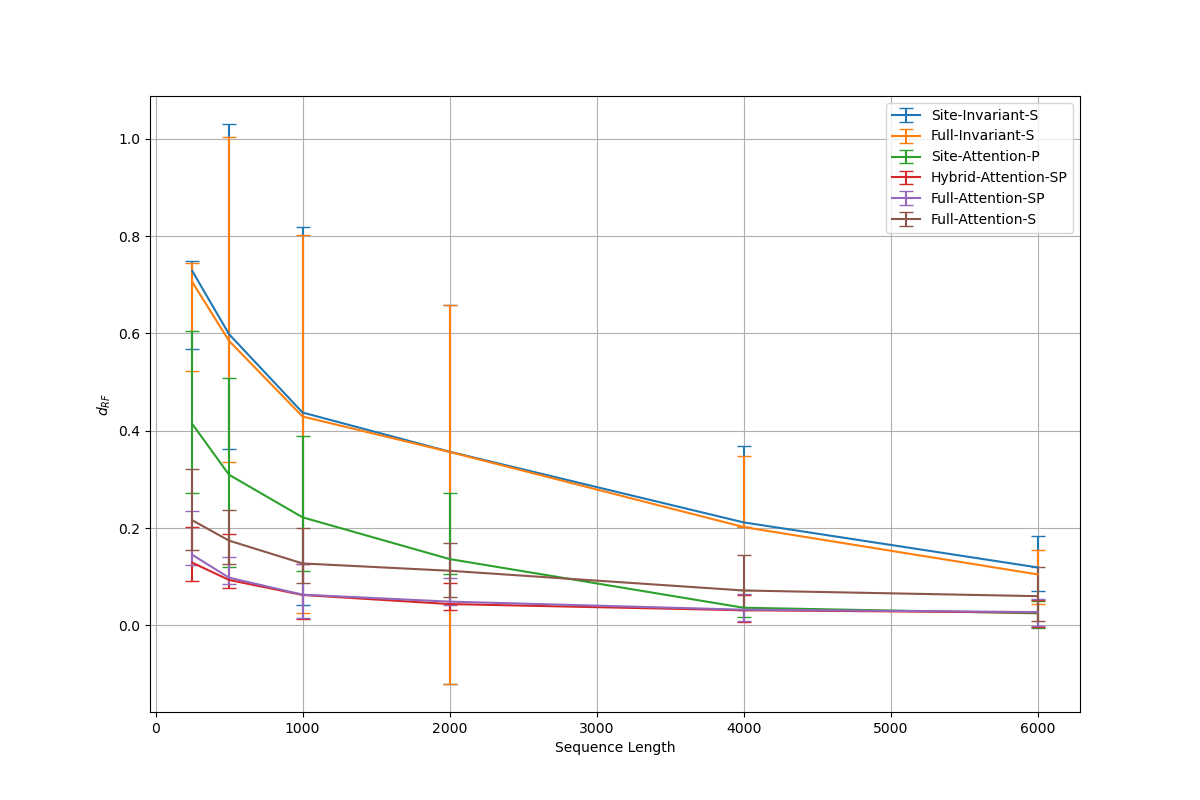

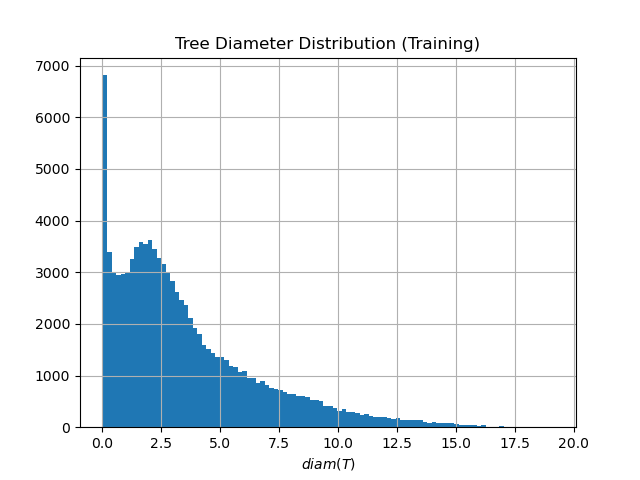

- The proposed minimal architectures (e.g., Sites-Invariant-S with ~7.6K parameters) achieve results comparable to state-of-the-art inference methods like IQ-TREE on simulated data under various models (JC, K2P, HKY, LG+indels), outperforming classic pairwise distance methods (d_H, d_JC, d_K2P) in most conditions.

- Architectures incorporating taxa-wise attention, while more memory-intensive, are necessary for complex evolutionary models with spatial dependencies; however, simpler networks suffice for simpler i.i.d. models, indicating an architecture-evolutionary model correspondence.

- Performance is highly sensitive to hyperparameters: validation error increases sharply with fewer than 4 attention heads or with hidden channel counts outside an optimal range (e.g., 32-128), aligning with theoretical requirements for learning graph-structured data.
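For reference, the classic pairwise distances used as baselines above have simple closed forms. The sketch below uses the standard textbook definitions of the normalized Hamming distance, the Jukes-Cantor correction, and the Kimura two-parameter correction. It assumes aligned, gap-free nucleotide sequences, and the function names are hypothetical; whether the paper normalizes d_H in the same way is an assumption.

```python
import math

PURINES = {"A", "G"}
PYRIMIDINES = {"C", "T"}


def site_proportions(seq_a, seq_b):
    """Return (P, Q): fractions of transition and transversion sites."""
    if len(seq_a) != len(seq_b) or not seq_a:
        raise ValueError("sequences must be aligned and non-empty")
    transitions = transversions = 0
    for a, b in zip(seq_a, seq_b):
        if a == b:
            continue
        pair = {a, b}
        # A<->G and C<->T are transitions; everything else is a transversion.
        if pair <= PURINES or pair <= PYRIMIDINES:
            transitions += 1
        else:
            transversions += 1
    n = len(seq_a)
    return transitions / n, transversions / n


def d_hamming(seq_a, seq_b):
    """Proportion of sites that differ (normalized Hamming distance)."""
    P, Q = site_proportions(seq_a, seq_b)
    return P + Q


def d_jc(seq_a, seq_b):
    """Jukes-Cantor distance: -3/4 * ln(1 - 4p/3)."""
    p = d_hamming(seq_a, seq_b)
    arg = 1.0 - 4.0 * p / 3.0
    # The correction is undefined once sequences are saturated.
    return math.inf if arg <= 0.0 else -0.75 * math.log(arg)


def d_k2p(seq_a, seq_b):
    """Kimura 2-parameter distance: -1/2 * ln((1 - 2P - Q) * sqrt(1 - 2Q))."""
    P, Q = site_proportions(seq_a, seq_b)
    a, b = 1.0 - 2.0 * P - Q, 1.0 - 2.0 * Q
    return math.inf if a <= 0.0 or b <= 0.0 else -0.5 * math.log(a * math.sqrt(b))
```

Each corrected distance depends on the sequence pair only through the site-difference proportions, which is one reason small permutation-invariant networks are a plausible way to approximate functions of this kind.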

Abstract: Inferring the phylogenetic relationships among a sample of organisms is a fundamental problem in modern biology. While distance-based hierarchical clustering algorithms achieved early success on this task, these have been supplanted by Bayesian and maximum likelihood search procedures based on complex models of molecular evolution. In this work we describe minimal neural network architectures that can approximate classic phylogenetic distance functions and the properties required to learn distances under a variety of molecular evolutionary models. In contrast to model-based inference (and recently proposed model-free convolutional and transformer networks), these architectures have a small computational footprint and are scalable to large numbers of taxa and molecular characters. The learned distance functions generalize well and, given an appropriate training dataset, achieve results comparable to state-of-the-art inference methods.