Paper List

-

Evolutionarily Stable Stackelberg Equilibrium

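Bridges the gap between the Stackelberg leadership model and evolutionary stability by requiring follower strategies to be robust to invasion by mutants.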

-

Recovering Sparse Neural Connectivity from Partial Measurements: A Covariance-Based Approach with Granger-Causality Refinement

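Reconstructs full neural connectivity from partial recordings by accumulating covariance statistics across multiple experimental sessions.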

-

Atomic Trajectory Modeling with State Space Models for Biomolecular Dynamics

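ATMOS bridges the gap between computationally expensive MD simulations and time-limited deep generative models by providing an efficient SSM-based framework for generating atomic-level trajectories of biomolecules.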

-

Slow evolution towards generalism in a model of variable dietary range

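Resolves the paradox of speciation under indirect competition by showing that demographic noise, rather than deterministic dynamics, drives pattern formation and the evolution of generalist diets.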

-

Grounded Multimodal Retrieval-Augmented Drafting of Radiology Impressions Using Case-Based Similarity Search

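Addresses hallucination in radiology report generation by grounding impression drafts in retrieved historical cases, with explicit citations and confidence-based refusal.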

-

Unified Policy–Value Decomposition for Rapid Adaptation

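Achieves zero-shot adaptation to novel tasks by sharing low-dimensional goal embeddings between the policy and value function through a bilinear decomposition.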

-

Mathematical Modeling of Cancer–Bacterial Therapy: Analysis and Numerical Simulation via Physics-Informed Neural Networks

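Provides a rigorous, mesh-free PINN framework for simulating and analyzing the complex, spatially heterogeneous interactions in bacterial cancer therapy.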

-

Sample-Efficient Adaptation of Drug-Response Models to Patient Tumors under Strong Biological Domain Shift

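Enables effective prediction of patient drug response from minimal clinical data by learning transferable representations from unlabeled molecular profiles.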

Setting up for failure: automatic discovery of the neural mechanisms of cognitive errors

Department of Engineering, University of Cambridge | Department of Psychology, University of Cambridge | Department of Cognitive Science, Central European University

30-Second Read

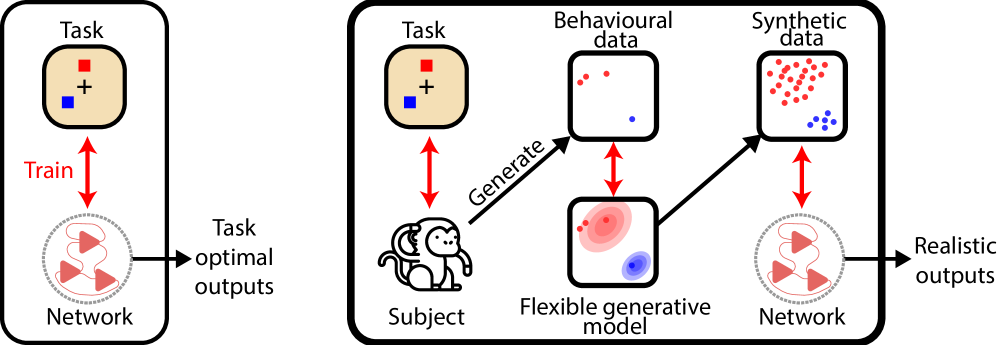

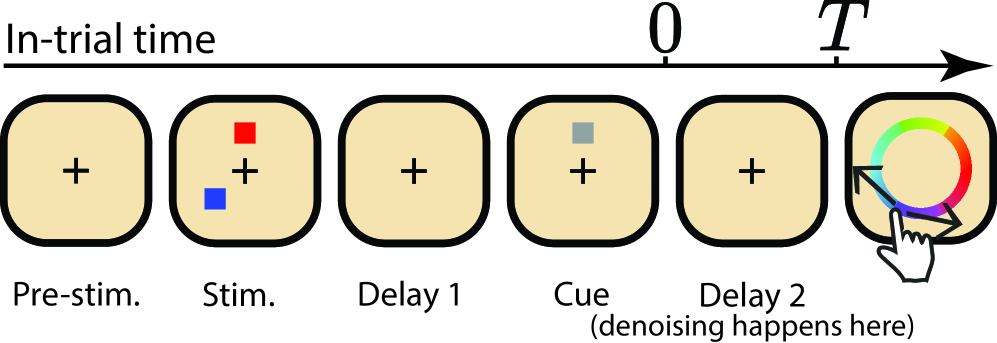

IN SHORT: This paper addresses the core challenge of automating the discovery of biologically plausible recurrent neural network (RNN) dynamics that can replicate the full richness of human and animal behavioral data, including characteristic errors and suboptimalities, rather than just optimal task performance.

Key Innovations

- Methodology: Introduces a novel diffusion model-based training objective for RNNs to capture complex, multimodal behavioral response distributions (e.g., from swap errors), moving beyond traditional moment-matching or simple loss functions like MSE.

- Methodology: Proposes using a non-parametric generative model (Bayesian Non-parametric model of Swap errors, BNS) to create surrogate behavioral data for training, overcoming the data scarcity problem inherent in experimental neuroscience.

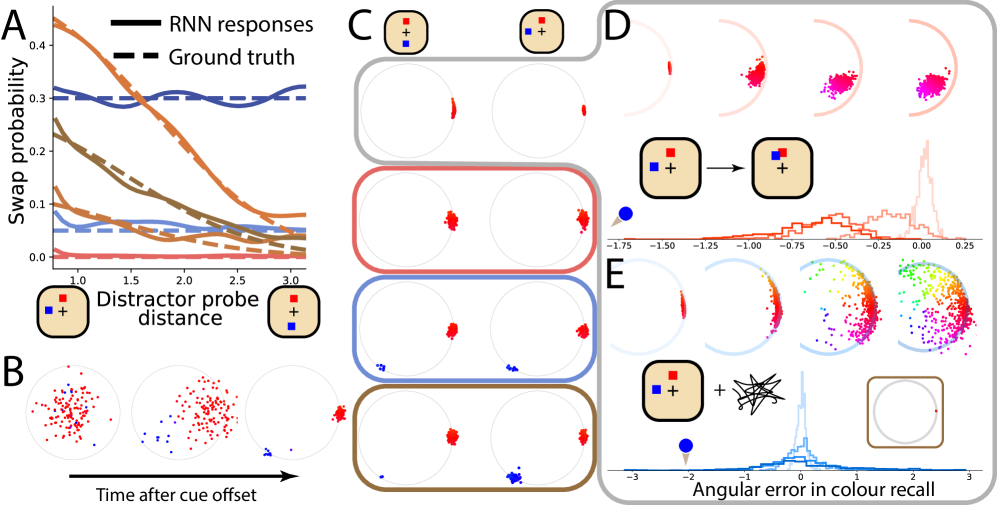

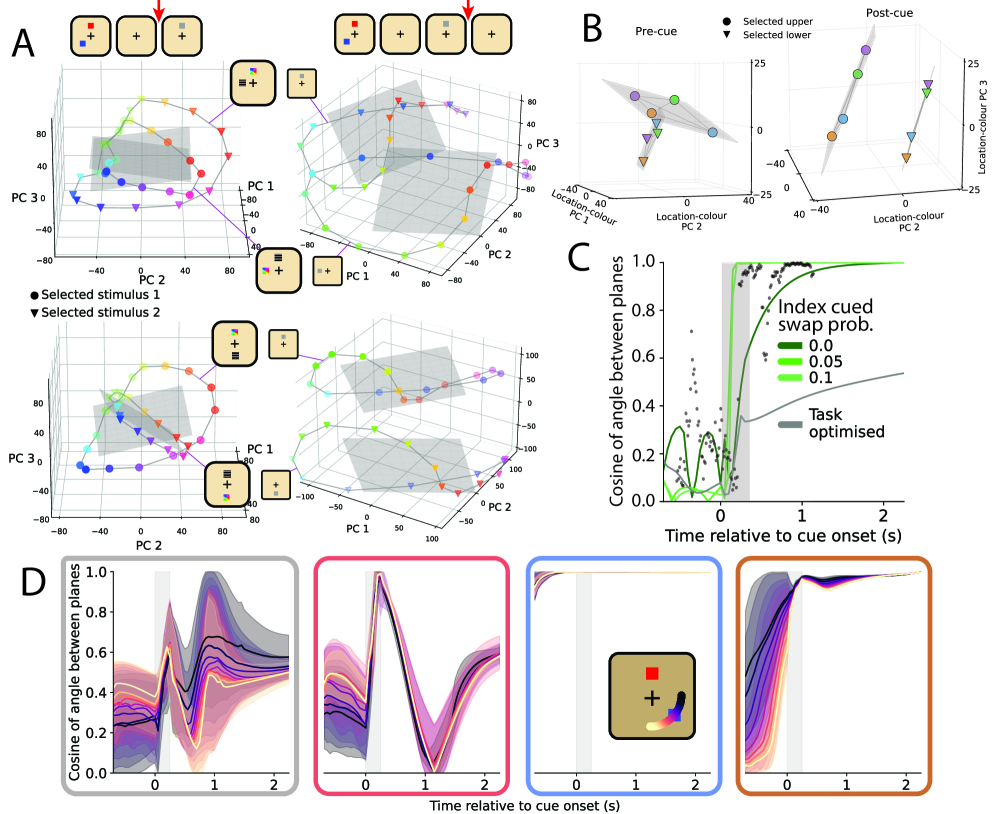

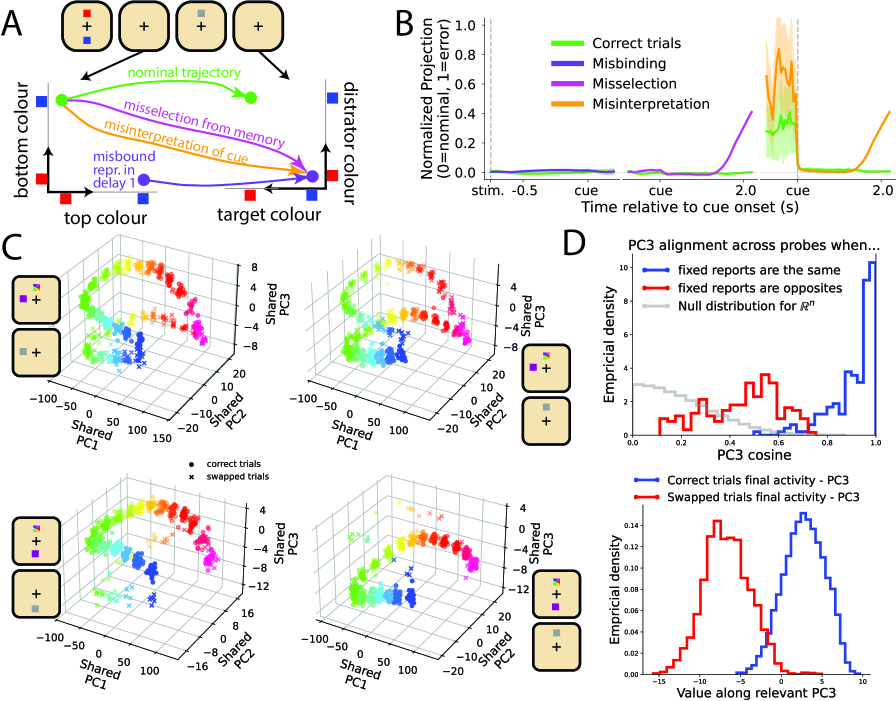

- Biology: Demonstrates that RNNs trained to reproduce suboptimal behavior (swap errors) successfully recapitulate qualitative neural signatures (e.g., planar alignment of population activity) observed in macaque prefrontal cortex during visual working memory tasks, which task-optimal networks fail to capture.
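To make the idea of surrogate behavioral data with swap errors concrete, here is a minimal sketch. It is not the BNS model from the paper: it swaps in a simple parametric stand-in, where the probability of a swap decays with the circular distance between target and distractor. The names `swap_prob`, `sample_responses`, `p_max`, `width`, and `kappa` are hypothetical and chosen only for illustration.

```python
import numpy as np

def circ_dist(a, b):
    """Signed circular difference a - b, wrapped to [-pi, pi)."""
    return (a - b + np.pi) % (2 * np.pi) - np.pi

def swap_prob(target, distractor, p_max=0.3, width=0.8):
    """Hypothetical swap probability that decays with target-distractor distance.

    This Gaussian-shaped curve is an assumption for illustration, not the
    non-parametric function inferred by BNS.
    """
    d = circ_dist(target, distractor)
    return p_max * np.exp(-0.5 * (d / width) ** 2)

def sample_responses(target, distractor, n, rng, kappa=8.0):
    """Draw n surrogate responses (radians) for one target/distractor pair.

    With probability swap_prob the response is centered on the distractor
    (a swap error); otherwise it is centered on the target.
    """
    swapped = rng.random(n) < swap_prob(target, distractor)
    centers = np.where(swapped, distractor, target)
    responses = rng.vonmises(centers, kappa)  # von Mises noise around each center
    return responses, swapped

rng = np.random.default_rng(0)
responses, swapped = sample_responses(target=0.0, distractor=0.5, n=5000, rng=rng)
print(f"empirical swap rate: {swapped.mean():.3f}")
```

The resulting response distribution is bimodal whenever the distractor is far enough from the target for the two von Mises bumps to separate, which is the kind of structure a training objective has to capture.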

Main Conclusions

- RNNs trained with the novel diffusion-based method to reproduce probe-distance-dependent swap errors successfully matched the planar alignment geometry of neural population activity observed in macaque PFC (cosine similarity increase during cue period, as in Panichello et al., 2021), a signature not captured by task-optimal or no-swap-error models.

- The method accurately replicated target swap error rates as a function of distractor proximity (as defined by the generative BNS model), demonstrating quantitative fitting to complex behavioral distributions.

- The approach generated novel, testable hypotheses about the neural circuit mechanisms underlying swap errors (e.g., misselection at cue time), moving beyond descriptive population coding models.
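One plausible way to quantify the planar alignment mentioned in the conclusions is to fit a plane to each set of population-activity vectors and compare the planes through their principal angles. The sketch below does this with NumPy; it is an illustrative measure under that assumption, not necessarily the metric used in the paper or in Panichello et al. (2021). The function names `plane_basis` and `plane_alignment` are hypothetical.

```python
import numpy as np

def plane_basis(X):
    """Orthonormal basis (n_neurons x 2) of the best-fit plane through the rows of X.

    X has shape (n_conditions, n_neurons); the plane is spanned by the top two
    principal directions of the mean-centered data.
    """
    Xc = X - X.mean(axis=0)
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    return vt[:2].T

def plane_alignment(X1, X2):
    """Mean cosine of the two principal angles between the fitted planes.

    Returns 1.0 for identical planes and 0.0 for fully orthogonal planes.
    """
    s = np.linalg.svd(plane_basis(X1).T @ plane_basis(X2), compute_uv=False)
    return float(np.clip(s, 0.0, 1.0).mean())

# Toy example: two rings of activity embedded in a 10-neuron space.
theta = np.linspace(0, 2 * np.pi, 8, endpoint=False)
ring = np.stack([np.cos(theta), np.sin(theta)], axis=1)
E = np.eye(10)
same_plane = plane_alignment(ring @ E[:2], 3 * ring @ E[:2])
orthogonal = plane_alignment(ring @ E[:2], ring @ E[2:4])
print(same_plane, orthogonal)
```

Tracking such a score over time windows (for example before and after the cue) would show whether the two representations rotate into a common plane.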

Abstract: Discovering the neural mechanisms underpinning cognition is one of the grand challenges of neuroscience. However, previous approaches for building models of recurrent neural network (RNN) dynamics that explain behaviour required iterative refinement of architectures and/or optimization objectives, resulting in a piecemeal, and mostly heuristic, human-in-the-loop process. Here, we offer an alternative approach that automates the discovery of viable RNN mechanisms by explicitly training RNNs to reproduce behaviour, including the same characteristic errors and suboptimalities, that humans and animals produce in a cognitive task. Achieving this required two main innovations. First, as the amount of behavioural data that can be collected in experiments is often too limited to train RNNs, we use a non-parametric generative model of behavioural responses to produce surrogate data for training RNNs. Second, to capture all relevant statistical aspects of the data, rather than a limited number of hand-picked low-order moments as in previous moment-matching-based approaches, we developed a novel diffusion model-based approach for training RNNs. To showcase the potential of our approach, we chose a visual working memory task as our test-bed, as behaviour in this task is well known to produce response distributions that are patently multimodal (due to so-called swap errors). The resulting network dynamics correctly predicted previously reported qualitative features of neural data recorded in macaques. Importantly, these results were not possible to obtain with more traditional approaches, i.e., when only a limited set of behavioural signatures (rather than the full richness of behavioural response distributions) were fitted, or when RNNs were trained for task optimality (instead of reproducing behaviour). Our approach also yields novel predictions about the mechanism of swap errors, which can be readily tested in experiments. These results suggest that fitting RNNs to rich patterns of behaviour provides a powerful way to automatically discover the neural network dynamics supporting important cognitive functions.
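A small numerical illustration of why a point-estimate loss such as MSE is a poor fit for multimodal responses: the MSE-optimal single prediction is the sample mean, which for a bimodal distribution lands between the modes where almost no responses occur. The sketch below uses a toy two-Gaussian mixture on a line rather than circular data; all names and parameter values (`simulate_bimodal`, `mass_near`, `p_swap`, `distractor`, `sd`) are assumptions for illustration and do not come from the paper.

```python
import numpy as np

def simulate_bimodal(n, rng, p_swap=0.25, distractor=2.0, sd=0.2):
    """Toy responses: centered on 0 (target) or on `distractor` with probability p_swap."""
    swapped = rng.random(n) < p_swap
    centers = np.where(swapped, distractor, 0.0)
    return centers + sd * rng.standard_normal(n)

def mass_near(x, center, half_width=0.1):
    """Fraction of samples within half_width of center."""
    return float(np.mean(np.abs(x - center) < half_width))

rng = np.random.default_rng(0)
responses = simulate_bimodal(10_000, rng)

mse_optimal = responses.mean()  # the constant prediction that minimizes MSE
print(f"MSE-optimal prediction: {mse_optimal:.2f}")
print(f"fraction of responses near the target: {mass_near(responses, 0.0):.3f}")
print(f"fraction near the MSE-optimal value:   {mass_near(responses, mse_optimal):.3f}")
```

A model trained to match the full response distribution, rather than its mean or a few low-order moments, would instead place mass on both modes.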