Paper List

- Formation of Artificial Neural Assemblies by Biologically Plausible Inhibition Mechanisms

  This work addresses the core limitation of the Assembly Calculus model (its fixed-size, biologically implausible k-WTA selection process) by introducing...

- How to make the most of your masked language model for protein engineering

  This paper addresses the critical bottleneck of efficiently sampling high-quality, diverse protein sequences from Masked Language Models (MLMs) for pr...

- Module control in youth symptom networks across COVID-19

  This paper addresses the core challenge of distinguishing whether a prolonged societal stressor (COVID-19) fundamentally reorganizes the architecture ...

- JEDI: Jointly Embedded Inference of Neural Dynamics

  This paper addresses the core challenge of inferring context-dependent neural dynamics from noisy, high-dimensional recordings using a single unified ...

- ATP Level and Phosphorylation Free Energy Regulate Trigger-Wave Speed and Critical Nucleus Size in Cellular Biochemical Systems

  This work addresses the core challenge of quantitatively predicting how the cellular energy state (ATP level and phosphorylation free energy) governs ...

- Packaging Jupyter notebooks as installable desktop apps using LabConstrictor

  This paper addresses the core pain point of ensuring Jupyter notebook reproducibility and accessibility across different computing environments, parti...

- SNPgen: Phenotype-Supervised Genotype Representation and Synthetic Data Generation via Latent Diffusion

  This paper addresses the core challenge of generating privacy-preserving synthetic genotype data that maintains both statistical fidelity and downstre...

- Continuous Diffusion Transformers for Designing Synthetic Regulatory Elements

  This paper addresses the challenge of efficiently generating novel, cell-type-specific regulatory DNA sequences with high predicted activity while min...

Emergent Bayesian Behaviour and Optimal Cue Combination in LLMs

Huawei Noah’s Ark Lab, London, UK | AI Centre, Department of Computer Science, University College London, London, UK

30-Second Read

IN SHORT: This paper addresses the critical gap in understanding whether LLMs spontaneously develop human-like Bayesian strategies for processing uncertain information, revealing that high accuracy does not guarantee robust multimodal integration.

Key Innovations

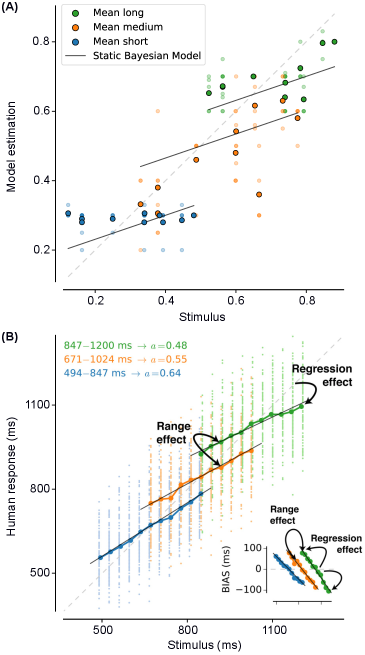

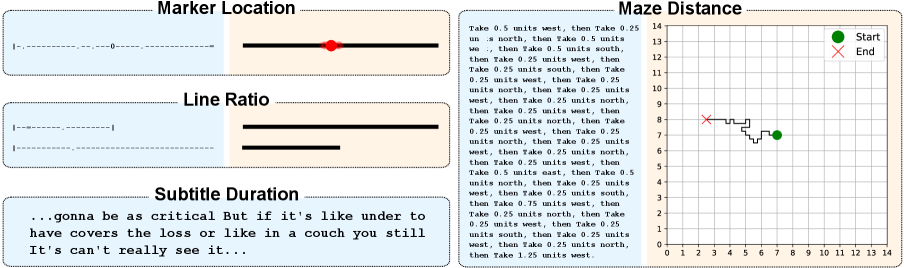

- Methodology: Introduces BayesBench, the first psychophysics-inspired behavioral benchmark for LLMs, with four magnitude estimation tasks (length, location, distance, duration) across text and image modalities.

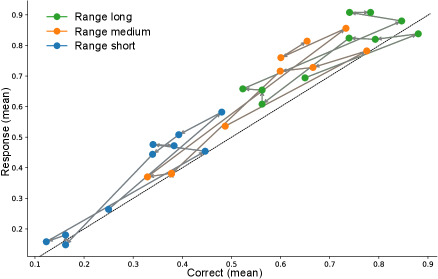

- Methodology: Develops the Bayesian Consistency Score (BCS) to detect Bayes-consistent behavioral shifts even when accuracy saturates, enabling separation of capability from computational strategy.

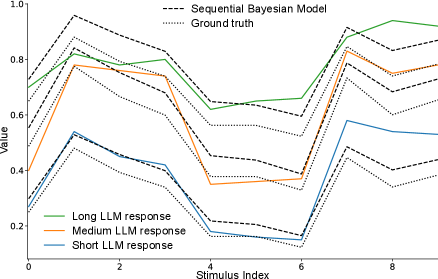

- Biology: Demonstrates emergent Bayesian behavior in capable LLMs without explicit training, with Llama-4 Maverick showing cue-combination efficiency that exceeds the Bayesian reliability-weighted baseline (RRE > 1 against the Bayesian oracle).
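To make the idea of a "Bayes-consistent behavioral shift" concrete, the sketch below uses the textbook Gaussian observer from psychophysics: with a Gaussian prior over stimulus magnitudes and Gaussian sensory noise, the estimate is pulled toward the prior mean, and the pull grows as noise increases. This is an illustration under those assumptions only. It is not the paper's definition of the Bayesian Consistency Score, and the function names and numbers are hypothetical.

```python
def posterior_mean(observation, noise_sd, prior_mean, prior_sd):
    """Posterior mean for a Gaussian prior combined with a Gaussian likelihood.

    Assumption: the textbook conjugate-Gaussian observer, not the paper's model.
    """
    prior_precision = 1.0 / prior_sd ** 2
    obs_precision = 1.0 / noise_sd ** 2
    # Weight given to the noisy observation; the remainder goes to the prior mean.
    weight_on_obs = obs_precision / (obs_precision + prior_precision)
    return weight_on_obs * observation + (1.0 - weight_on_obs) * prior_mean


def shrinkage_slope(noise_sd, prior_sd):
    """Slope of estimate versus stimulus; 1.0 means no pull toward the prior."""
    return prior_sd ** 2 / (prior_sd ** 2 + noise_sd ** 2)


if __name__ == "__main__":
    # Hypothetical stimulus range: prior centred at 5 with SD 2, observation at 10.
    for noise_sd in (0.5, 2.0, 8.0):
        estimate = posterior_mean(10.0, noise_sd, prior_mean=5.0, prior_sd=2.0)
        slope = shrinkage_slope(noise_sd, prior_sd=2.0)
        print(f"noise_sd={noise_sd}: estimate={estimate:.3f}, slope={slope:.3f}")
```

Under this model, a noise ablation that flattens the slope of estimates against true magnitudes is the kind of shift a Bayesian observer would show, even when overall error stays small.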

Main Conclusions

- GPT-5 Mini achieves perfect text accuracy (NRMSE ≈ 0) but integrates visual cues inefficiently (RRE < 1), so high capability does not guarantee efficient cue combination.

- Llama-4 Maverick demonstrates emergent Bayesian behavior with cue-combination efficiency exceeding Bayesian reliability-weighted baselines (RRE > 1), suggesting non-linear integration strategies.

- Bayesian Consistency Score reveals that more accurate models show stronger evidence of Bayesian behavior, with BCS positively correlated with accuracy across nine evaluated LLMs.
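The "Bayesian reliability-weighted baseline" mentioned above can be sketched with standard inverse-variance cue combination for two independent Gaussian cues, such as a text estimate and an image estimate. The code below is a minimal sketch under that assumption. The summary does not define RRE, so `illustrative_efficiency` is a hypothetical ratio chosen only to show what "above or below the oracle" could mean; it is not the paper's metric.

```python
import math


def combine_cues(est_a, sd_a, est_b, sd_b):
    """Inverse-variance (reliability-weighted) fusion of two independent Gaussian cues.

    Returns the fused estimate and its standard deviation.
    """
    rel_a = 1.0 / sd_a ** 2  # reliability = inverse variance
    rel_b = 1.0 / sd_b ** 2
    fused = (rel_a * est_a + rel_b * est_b) / (rel_a + rel_b)
    fused_sd = math.sqrt(1.0 / (rel_a + rel_b))
    return fused, fused_sd


def illustrative_efficiency(observed_rmse, oracle_sd):
    """Hypothetical efficiency ratio (NOT the paper's RRE definition).

    Values below 1 mean the observed error is larger than the oracle's.
    """
    return oracle_sd / observed_rmse


if __name__ == "__main__":
    # Hypothetical cues: a reliable text cue and a noisier image cue.
    fused, fused_sd = combine_cues(est_a=10.0, sd_a=1.0, est_b=14.0, sd_b=2.0)
    print(f"fused estimate={fused:.3f}, fused sd={fused_sd:.3f}")
    print(f"efficiency at rmse=1.2: {illustrative_efficiency(1.2, fused_sd):.3f}")
```

The fused standard deviation is never larger than that of the better single cue, which is why a model that ignores one modality falls short of this baseline.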

Abstract: Large language models (LLMs) excel at explicit reasoning, but their implicit computational strategies remain underexplored. Decades of psychophysics research show that humans intuitively process and integrate noisy signals using near-optimal Bayesian strategies in perceptual tasks. We ask whether LLMs exhibit similar behaviour and perform optimal multimodal integration without explicit training or instruction. Adopting the psychophysics paradigm, we infer computational principles of LLMs from systematic behavioural studies. We introduce a behavioural benchmark, BayesBench: four magnitude estimation tasks (length, location, distance, and duration) over text and image, inspired by classic psychophysics, and evaluate a diverse set of nine LLMs alongside human judgments for calibration. Through controlled ablations of noise, context, and instruction prompts, we measure performance, behaviour and efficiency in multimodal cue-combination. Beyond accuracy and efficiency metrics, we introduce a Bayesian Consistency Score that detects Bayes-consistent behavioural shifts even when accuracy saturates. Our results show that while capable models often adapt in Bayes-consistent ways, accuracy does not guarantee robustness. Notably, GPT-5 Mini achieves perfect text accuracy but fails to integrate visual cues efficiently. This reveals a critical dissociation between capability and strategy, suggesting accuracy-centric benchmarks may over-index on performance while missing brittle uncertainty handling. These findings reveal emergent principled handling of uncertainty and highlight the correlation between accuracy and Bayesian tendencies. We release our psychophysics benchmark and consistency metric as evaluation tools and to inform future multimodal architecture designs. Project webpage: https://bayes-bench.github.io