Paper List

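- Evolutionarily Stable Stackelberg Equilibrium

  Bridges the gap between the Stackelberg leadership model and evolutionary stability by requiring follower strategies to be robust against mutant invasion.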

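- Recovering Sparse Neural Connectivity from Partial Measurements: A Covariance-Based Approach with Granger-Causality Refinement

  Reconstructs full neural connectivity from partial recordings by accumulating covariance statistics across multiple experimental sessions.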

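- Atomic Trajectory Modeling with State Space Models for Biomolecular Dynamics

  ATMOS bridges the gap between computationally expensive MD simulations and time-limited deep generative models by providing an efficient SSM-based framework for atomic-level trajectory generation of biomolecules.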

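- Slow evolution towards generalism in a model of variable dietary range

  Resolves the paradox of speciation under indirect competition by showing that demographic noise, rather than deterministic dynamics, drives pattern formation and the evolution of dietary generalism.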

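- Grounded Multimodal Retrieval-Augmented Drafting of Radiology Impressions Using Case-Based Similarity Search

  Addresses hallucination in radiology report generation by grounding impression drafts in retrieved historical cases, with explicit citations and a confidence-based refusal mechanism.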

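- Unified Policy–Value Decomposition for Rapid Adaptation

  Achieves zero-shot adaptation to novel tasks by sharing a low-dimensional goal embedding between the policy and value functions through a bilinear decomposition.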

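- Mathematical Modeling of Cancer–Bacterial Therapy: Analysis and Numerical Simulation via Physics-Informed Neural Networks

  Provides a rigorous, mesh-free PINN framework for simulating and analyzing the complex, spatially heterogeneous interactions in bacterial cancer therapy.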

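- Sample-Efficient Adaptation of Drug-Response Models to Patient Tumors under Strong Biological Domain Shift

  Enables effective prediction of patient drug response from minimal clinical data by learning transferable representations from unlabeled molecular profiles.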

An AI Implementation Science Study to Improve Trustworthy Data in a Large Healthcare System

Georgia Institute of Technology, Atlanta, GA, USA | Shriners Hospitals for Children, Tampa, FL, USA

30-Second Read

IN SHORT: This paper addresses the critical gap between theoretical AI research and real-world clinical implementation by providing a practical framework for assessing and improving healthcare data quality using trustworthy AI principles.

Key Innovations

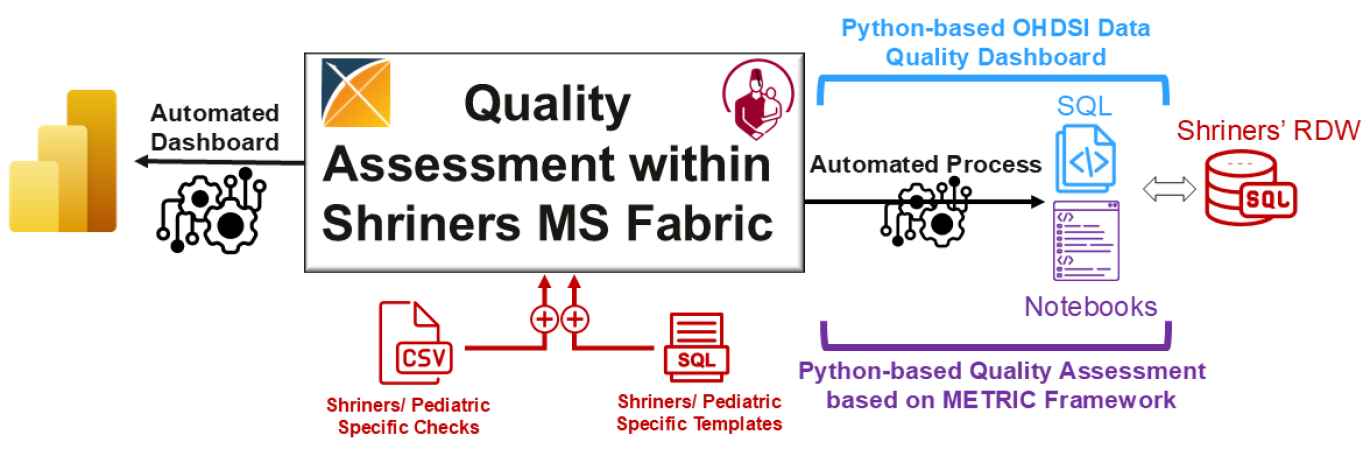

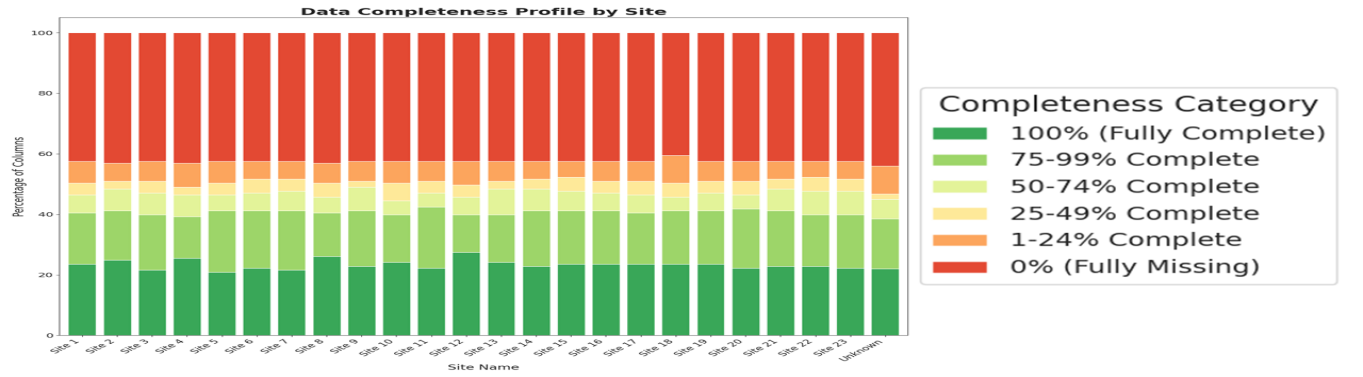

- Methodology: Developed a Python-based extension of OHDSI's Data Quality Dashboard (DQD) that integrates the METRIC framework for trustworthy AI assessment, addressing informative missingness, timeliness, and distribution consistency.

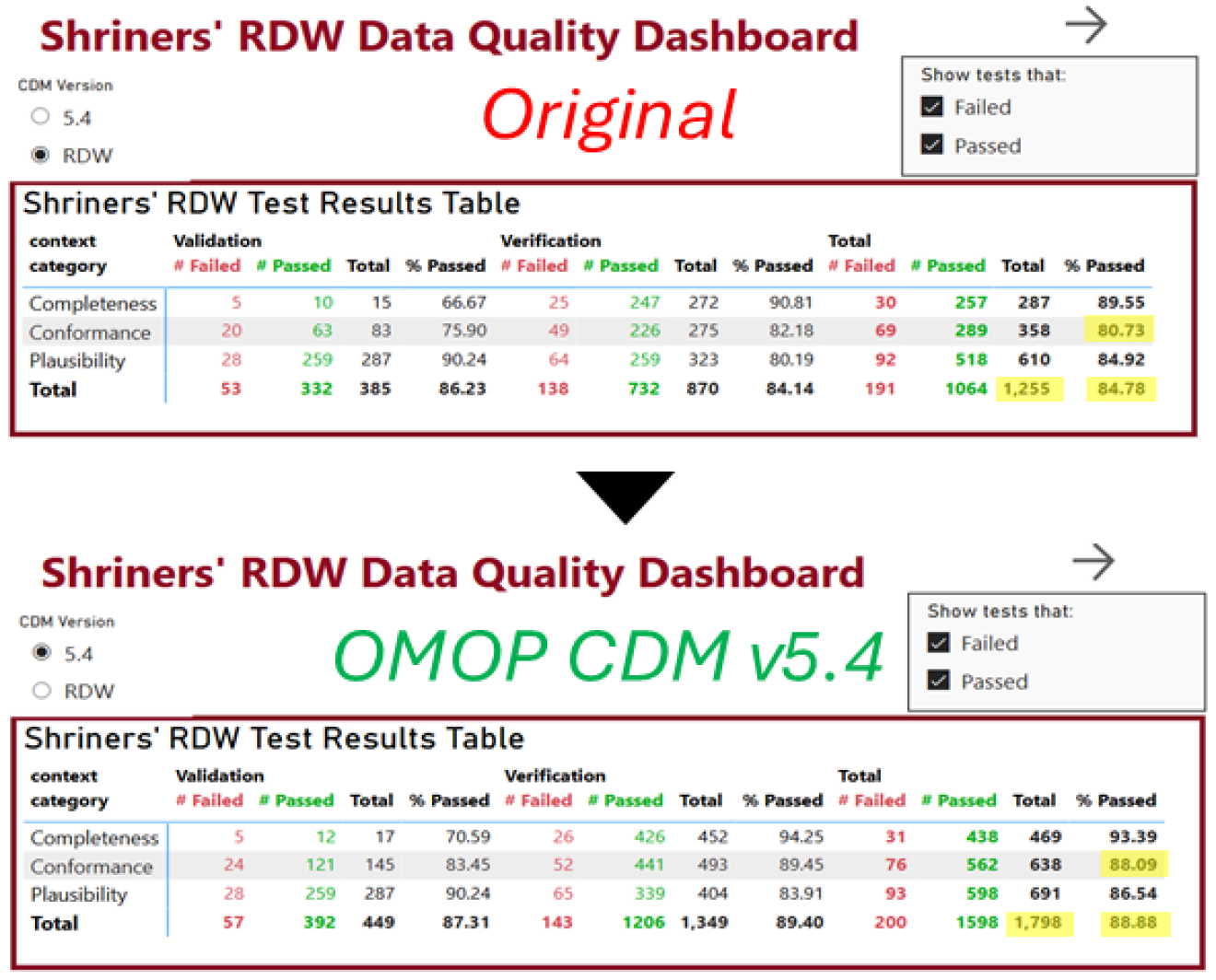

- Methodology: Implemented a real-world case study modernizing a large pediatric healthcare system's Research Data Warehouse from OMOP CDM v5.1/5.2 to v5.4 within Microsoft Fabric, raising the data quality test success rate by about 4 percentage points (84.78% to 88.88%).

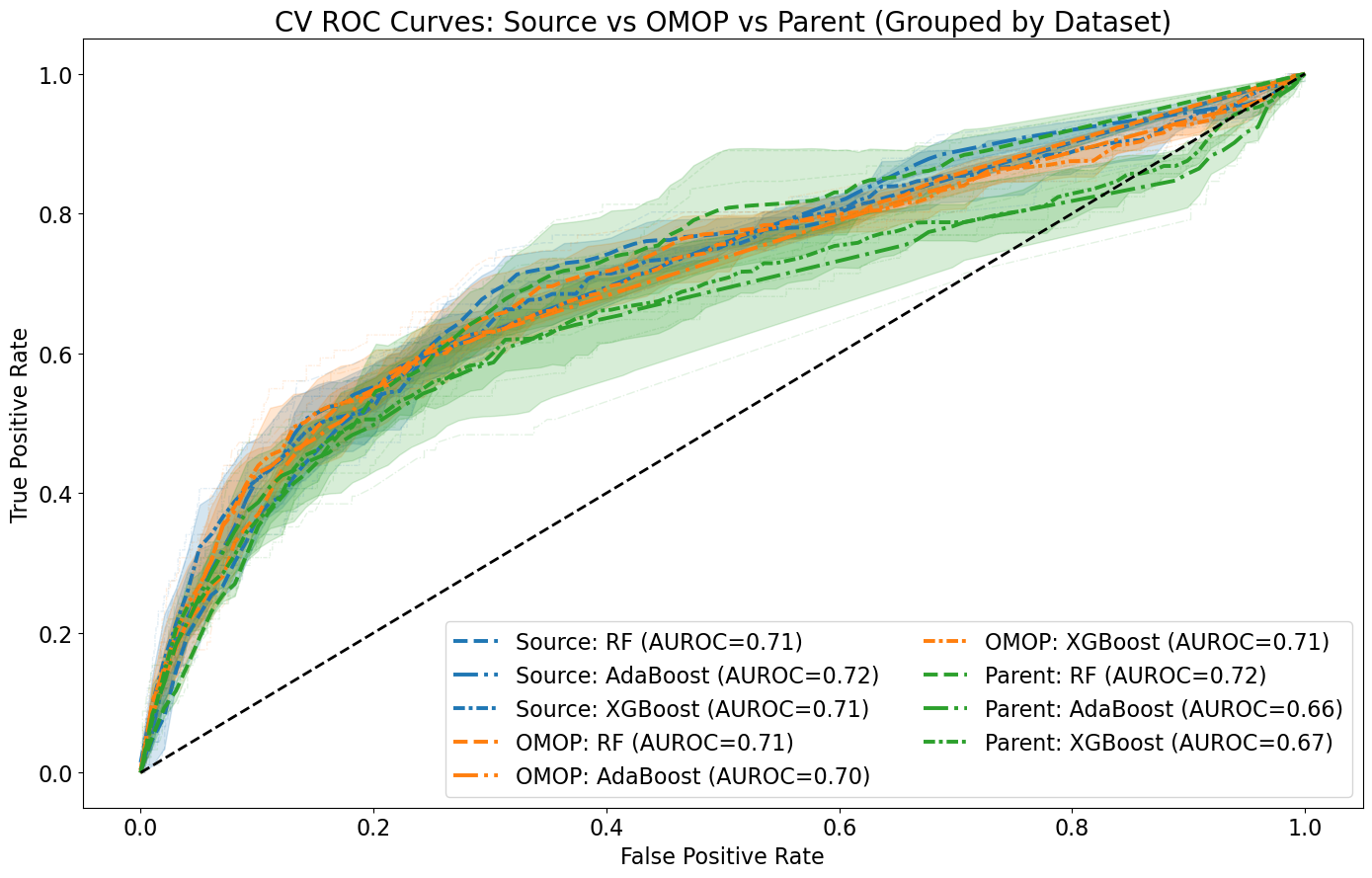

- Biology: Demonstrated in the Craniofacial Microsomia case study that data harmonization using OMOP CDM concept codes does not significantly impact AI model performance (mean AUROC: 71.3% with source codes vs. 70.0% with OMOP codes) while increasing interoperability.
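To make the three data-quality dimensions named above more concrete, here is a minimal Python sketch of what such checks might look like. It is not the authors' tool and does not use the DQD or METRIC APIs: the record fields, function names, and thresholds are all hypothetical, and the logic is deliberately simplified.

```python
from datetime import date
from statistics import mean

# Hypothetical toy records; field names are illustrative, not OMOP CDM columns.
records = [
    {"measured_on": date(2024, 1, 2), "loaded_on": date(2024, 1, 5), "value": 4.1, "outcome": 0},
    {"measured_on": date(2024, 1, 3), "loaded_on": date(2024, 2, 20), "value": None, "outcome": 1},
    {"measured_on": date(2024, 1, 9), "loaded_on": date(2024, 1, 10), "value": 3.8, "outcome": 0},
    {"measured_on": date(2024, 1, 9), "loaded_on": date(2024, 1, 12), "value": None, "outcome": 1},
]


def missingness_outcome_gap(rows, field, outcome):
    """Gap in mean outcome between rows where `field` is missing and rows
    where it is observed. A large gap hints that missingness is informative."""
    missing = [r[outcome] for r in rows if r[field] is None]
    observed = [r[outcome] for r in rows if r[field] is not None]
    if not missing or not observed:
        return 0.0  # nothing to compare
    return abs(mean(missing) - mean(observed))


def late_fraction(rows, max_lag_days):
    """Fraction of rows loaded more than `max_lag_days` after measurement."""
    lags = [(r["loaded_on"] - r["measured_on"]).days for r in rows]
    return sum(lag > max_lag_days for lag in lags) / len(rows)


def mean_shift(reference, current):
    """Absolute difference in sample means, a crude distribution-consistency signal."""
    return abs(mean(reference) - mean(current))


# Assumed thresholds, chosen only for illustration.
checks = {
    "informative_missingness": missingness_outcome_gap(records, "value", "outcome") <= 0.2,
    "timeliness": late_fraction(records, max_lag_days=30) <= 0.25,
    "distribution_consistency": mean_shift([4.0, 4.2, 3.9], [4.1, 3.8]) <= 0.5,
}
success_rate = sum(checks.values()) / len(checks)
print(checks, f"success rate: {success_rate:.2%}")
```

Each check reduces to a pass/fail result, so a success rate can be reported as the share of checks that pass, similar in spirit to the success rates quoted for the case study.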

Main Conclusions

- Modernizing the OMOP CDM database at Shriners Children's (SC) from v5.1/5.2 to v5.4 improved the overall data quality success rate by about 4 percentage points (84.78% to 88.88%) and conformance by about 7 percentage points (80.73% to 88.09%).

- Data harmonization using OMOP CDM concept codes maintained comparable AI model performance (mean AUROC difference: 1.3 percentage points) while enabling better interoperability across healthcare systems.

- Only 50% of ICD-9 codes shared common mappings with ICD-10 codes, revealing significant vocabulary transition challenges that could degrade AI model performance when encountering mixed coding systems.
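The improvements above are differences between two percentages, so it helps to separate percentage-point change from relative change. The short Python sketch below recomputes both from the figures reported in this summary; the helper name is hypothetical and nothing here comes from the authors' code.

```python
def point_and_relative_change(before, after):
    """Return (percentage-point change, relative change in %) between two rates."""
    points = after - before
    relative = 100 * points / before
    return round(points, 2), round(relative, 2)


# Figures as reported in the conclusions above.
quality = point_and_relative_change(84.78, 88.88)      # overall success rate
conformance = point_and_relative_change(80.73, 88.09)  # conformance checks
auroc_gap = round(71.3 - 70.0, 2)                      # source codes vs. OMOP codes

print("overall quality:", quality)
print("conformance:", conformance)
print("AUROC gap (points):", auroc_gap)
```

Reading the results as percentage points keeps the comparison with the AUROC gap consistent, since that gap is also a difference between two percentages.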

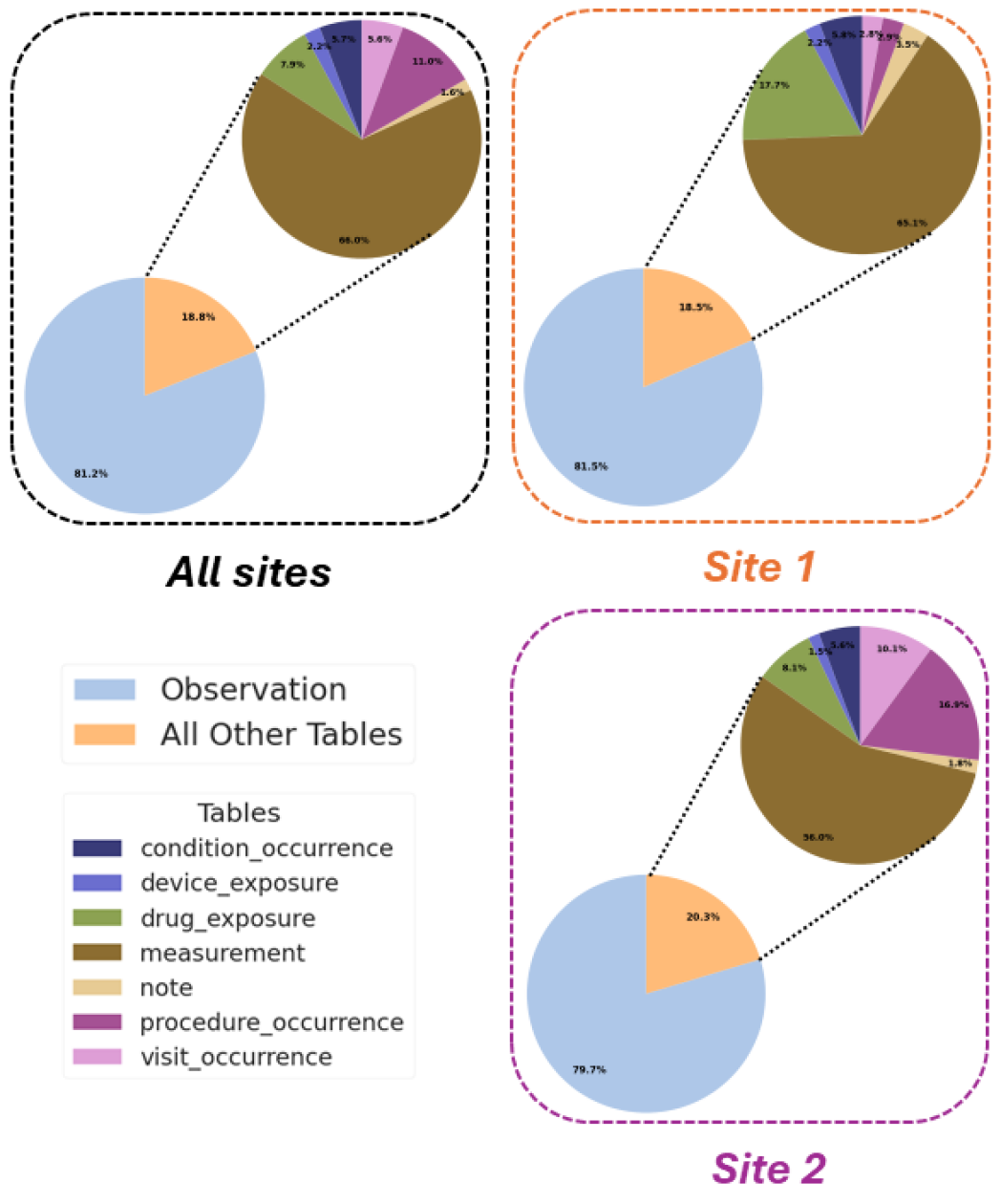

Abstract: The rapid growth of Artificial Intelligence (AI) in healthcare has sparked interest in Trustworthy AI and AI Implementation Science, both of which are essential for accelerating clinical adoption. Yet, barriers such as strict regulations, gaps between research and clinical settings, and challenges in evaluating AI systems hinder real-world implementation. This study presents an AI implementation case study within Shriners Children’s (SC), a large multisite pediatric system, showcasing the modernization of SC’s Research Data Warehouse (RDW) to OMOP CDM v5.4 within a secure Microsoft Fabric environment. We introduce a Python-based data quality assessment tool compatible with SC’s infrastructure, an extension of OHDSI’s R/Java-based Data Quality Dashboard (DQD) that integrates Trustworthy AI principles using the METRIC framework. This extension enhances data quality evaluation by addressing informative missingness, redundancy, timeliness, and distributional consistency. We also compare systematic and case-specific AI implementation strategies for Craniofacial Microsomia (CFM) using the FHIR standard. Our contributions include a real-world evaluation of AI implementations, integration of Trustworthy AI in data quality assessment, and evidence-based insights into hybrid implementation strategies, highlighting the need to blend systematic infrastructure with use-case-driven approaches to advance AI in healthcare.