Paper List

- Macroscopic Dominance from Microscopic Extremes: Symmetry Breaking in Spatial Competition

  This paper addresses the fundamental question of how microscopic stochastic advantages in spatial exploration translate into macroscopic resource domi...

- Linear Readout of Neural Manifolds with Continuous Variables

  This paper addresses the core challenge of quantifying how the geometric structure of high-dimensional neural population activity (neural manifolds) d...

- Theory of Cell Body Lensing and Phototaxis Sign Reversal in “Eyeless” Mutants of Chlamydomonas

  This paper solves the core puzzle of how eyeless mutants of Chlamydomonas exhibit reversed phototaxis by quantitatively modeling the competition betwe...

- Cross-Species Transfer Learning for Electrophysiology-to-Transcriptomics Mapping in Cortical GABAergic Interneurons

  This paper addresses the challenge of predicting transcriptomic identity from electrophysiological recordings in human cortical interneurons, where li...

- Uncovering statistical structure in large-scale neural activity with Restricted Boltzmann Machines

  This paper addresses the core challenge of modeling large-scale neural population activity (1500-2000 neurons) with interpretable higher-order interac...

- Realizing Common Random Numbers: Event-Keyed Hashing for Causally Valid Stochastic Models

  This paper addresses the critical problem that standard stateful PRNG implementations in agent-based models violate causal validity by making random d...

- A Standardized Framework for Evaluating Gene Expression Generative Models

  This paper addresses the critical lack of standardized evaluation protocols for single-cell gene expression generative models, where inconsistent metr...

- Single Molecule Localization Microscopy Challenge: A Biologically Inspired Benchmark for Long-Sequence Modeling

  This paper addresses the core challenge of evaluating state-space models on biologically realistic, sparse, and stochastic temporal processes, which a...

Emergent Bayesian Behaviour and Optimal Cue Combination in LLMs

Huawei Noah’s Ark Lab, London, UK | AI Centre, Department of Computer Science, University College London, London, UK

30-Second Read

IN SHORT: This paper addresses the critical gap in understanding whether LLMs spontaneously develop human-like Bayesian strategies for processing uncertain information, revealing that high accuracy does not guarantee robust multimodal integration.

Key Innovations

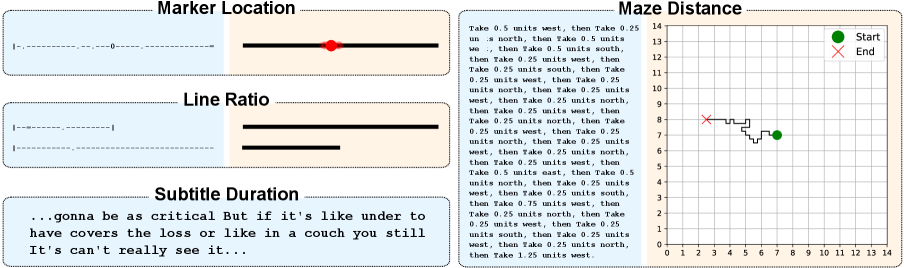

- Methodology: Introduces BayesBench, the first psychophysics-inspired behavioral benchmark for LLMs, with four magnitude estimation tasks (length, location, distance, duration) across text and image modalities.

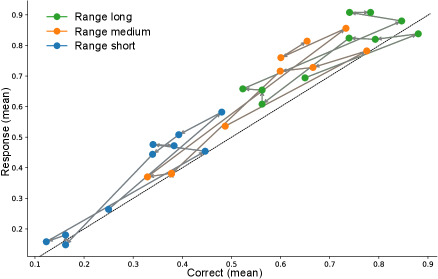

- Methodology: Develops the Bayesian Consistency Score (BCS), which detects Bayes-consistent behavioral shifts even when accuracy saturates and so separates capability from computational strategy.

- Biology: Demonstrates emergent Bayesian behavior in capable LLMs without explicit training; Llama-4 Maverick shows cue-combination efficiency above the Bayesian oracle reference (RRE > 1).
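To make "Bayes-consistent behavioral shift" concrete, here is a minimal sketch of the textbook Gaussian Bayesian observer from psychophysics. It is an illustration only, not the benchmark's code or the BCS definition: the function names, the prior parameters, and the simulation setup are all assumptions. It shows the signature such a score would plausibly look for: as observation noise grows, estimates are pulled harder toward the prior mean, so the slope of estimate against true stimulus drops.

```python
import random


def posterior_mean(observation, prior_mean, prior_var, noise_var):
    """Posterior mean for a Gaussian prior and Gaussian observation noise."""
    # The weight on the observation shrinks as the noise variance grows.
    w = prior_var / (prior_var + noise_var)
    return w * observation + (1.0 - w) * prior_mean


def simulate_slope(noise_sd, prior_mean=10.0, prior_sd=2.0, n=20000, seed=0):
    """Simulate an ideal observer and return the least-squares slope of
    its estimates regressed on the true stimulus magnitudes.

    All parameter values here are hypothetical, chosen for illustration.
    """
    rng = random.Random(seed)
    stimuli, estimates = [], []
    for _ in range(n):
        s = rng.gauss(prior_mean, prior_sd)      # true magnitude
        obs = s + rng.gauss(0.0, noise_sd)       # noisy measurement
        stimuli.append(s)
        estimates.append(
            posterior_mean(obs, prior_mean, prior_sd ** 2, noise_sd ** 2)
        )
    mean_s = sum(stimuli) / n
    mean_e = sum(estimates) / n
    cov = sum((s - mean_s) * (e - mean_e) for s, e in zip(stimuli, estimates))
    var = sum((s - mean_s) ** 2 for s in stimuli)
    return cov / var


if __name__ == "__main__":
    for sd in (0.5, 1.0, 2.0):
        print(f"noise_sd={sd}: slope={simulate_slope(sd):.3f}")
```

Under these assumptions the slope approaches `prior_var / (prior_var + noise_var)`, so it falls as the noise level rises. A slope that stays near 1 regardless of noise would indicate no such shift.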

Main Conclusions

- GPT-5 Mini achieves perfect text accuracy (NRMSE ≈ 0) but integrates visual cues inefficiently (RRE < 1), so high capability does not imply efficient cue combination.

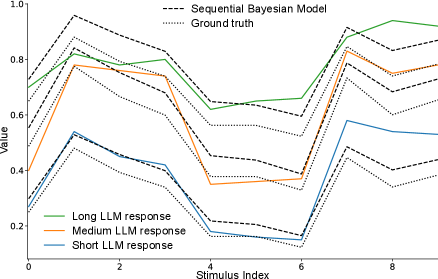

- Llama-4 Maverick demonstrates emergent Bayesian behavior with cue-combination efficiency exceeding Bayesian reliability-weighted baselines (RRE > 1), suggesting non-linear integration strategies.

- Bayesian Consistency Score reveals that more accurate models show stronger evidence of Bayesian behavior, with BCS positively correlated with accuracy across nine evaluated LLMs.

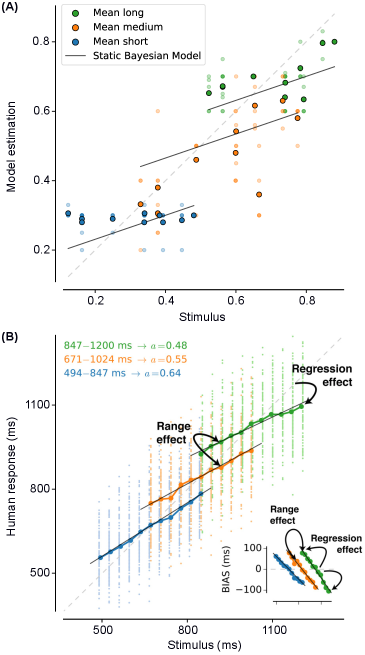
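The "Bayesian reliability-weighted baseline" mentioned above can be sketched with the standard inverse-variance rule for two independent Gaussian cues. This is a hedged illustration rather than the paper's implementation: `combine_cues` and `efficiency_ratio` are hypothetical names, and the ratio below is a generic stand-in for comparing observed error with the baseline, not the paper's definition of RRE, which is not given in this summary.

```python
def combine_cues(est_a, var_a, est_b, var_b):
    """Inverse-variance (reliability-weighted) combination of two
    independent Gaussian cues. Returns (combined estimate, combined variance)."""
    rel_a, rel_b = 1.0 / var_a, 1.0 / var_b      # reliability = 1 / variance
    w_a = rel_a / (rel_a + rel_b)                # weight on cue A
    combined = w_a * est_a + (1.0 - w_a) * est_b
    combined_var = 1.0 / (rel_a + rel_b)         # never above the smaller input variance
    return combined, combined_var


def efficiency_ratio(predicted_var, observed_var):
    """Hypothetical efficiency measure (an assumption, not the paper's RRE):
    values above 1 mean the observed error variance is below the
    reliability-weighted prediction."""
    return predicted_var / observed_var


if __name__ == "__main__":
    # Hypothetical numbers: a precise text cue and a noisier image cue.
    estimate, variance = combine_cues(10.0, 1.0, 14.0, 4.0)
    print(estimate, variance)
    print(efficiency_ratio(variance, observed_var=1.0))
```

In this sketch the combined estimate sits closer to the more reliable cue, and the predicted variance is lower than that of either cue alone. An observer that ignores the second cue would keep the single-cue variance and score below 1 on the ratio.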

Abstract: Large language models (LLMs) excel at explicit reasoning, but their implicit computational strategies remain underexplored. Decades of psychophysics research show that humans intuitively process and integrate noisy signals using near-optimal Bayesian strategies in perceptual tasks. We ask whether LLMs exhibit similar behaviour and perform optimal multimodal integration without explicit training or instruction. Adopting the psychophysics paradigm, we infer computational principles of LLMs from systematic behavioural studies. We introduce a behavioural benchmark, BayesBench: four magnitude estimation tasks (length, location, distance, and duration) over text and image, inspired by classic psychophysics, and evaluate a diverse set of nine LLMs alongside human judgments for calibration. Through controlled ablations of noise, context, and instruction prompts, we measure performance, behaviour and efficiency in multimodal cue-combination. Beyond accuracy and efficiency metrics, we introduce a Bayesian Consistency Score that detects Bayes-consistent behavioural shifts even when accuracy saturates. Our results show that while capable models often adapt in Bayes-consistent ways, accuracy does not guarantee robustness. Notably, GPT-5 Mini achieves perfect text accuracy but fails to integrate visual cues efficiently. This reveals a critical dissociation between capability and strategy, suggesting accuracy-centric benchmarks may over-index on performance while missing brittle uncertainty handling. These findings reveal emergent principled handling of uncertainty and highlight the correlation between accuracy and Bayesian tendencies. We release our psychophysics benchmark and consistency metric as evaluation tools and to inform future multimodal architecture designs. Project webpage: https://bayes-bench.github.io