Paper List

-

Macroscopic Dominance from Microscopic Extremes: Symmetry Breaking in Spatial Competition

This paper addresses the fundamental question of how microscopic stochastic advantages in spatial exploration translate into macroscopic resource domi...

-

Linear Readout of Neural Manifolds with Continuous Variables

This paper addresses the core challenge of quantifying how the geometric structure of high-dimensional neural population activity (neural manifolds) d...

-

Theory of Cell Body Lensing and Phototaxis Sign Reversal in “Eyeless” Mutants of Chlamydomonas

This paper solves the core puzzle of how eyeless mutants of Chlamydomonas exhibit reversed phototaxis by quantitatively modeling the competition betwe...

-

Cross-Species Transfer Learning for Electrophysiology-to-Transcriptomics Mapping in Cortical GABAergic Interneurons

This paper addresses the challenge of predicting transcriptomic identity from electrophysiological recordings in human cortical interneurons, where li...

-

Uncovering statistical structure in large-scale neural activity with Restricted Boltzmann Machines

This paper addresses the core challenge of modeling large-scale neural population activity (1500-2000 neurons) with interpretable higher-order interac...

-

Realizing Common Random Numbers: Event-Keyed Hashing for Causally Valid Stochastic Models

This paper addresses the critical problem that standard stateful PRNG implementations in agent-based models violate causal validity by making random d...

-

A Standardized Framework for Evaluating Gene Expression Generative Models

This paper addresses the critical lack of standardized evaluation protocols for single-cell gene expression generative models, where inconsistent metr...

-

Single Molecule Localization Microscopy Challenge: A Biologically Inspired Benchmark for Long-Sequence Modeling

This paper addresses the core challenge of evaluating state-space models on biologically realistic, sparse, and stochastic temporal processes, which a...

Setting up for failure: automatic discovery of the neural mechanisms of cognitive errors

Department of Engineering, University of Cambridge | Department of Psychology, University of Cambridge | Department of Cognitive Science, Central European University

30秒速读

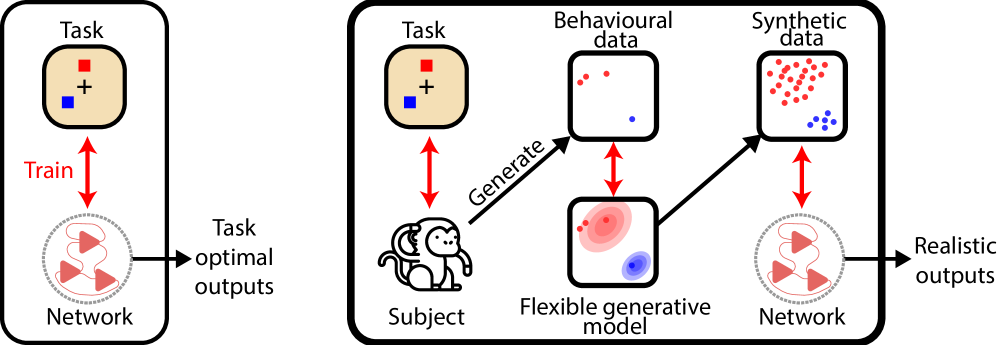

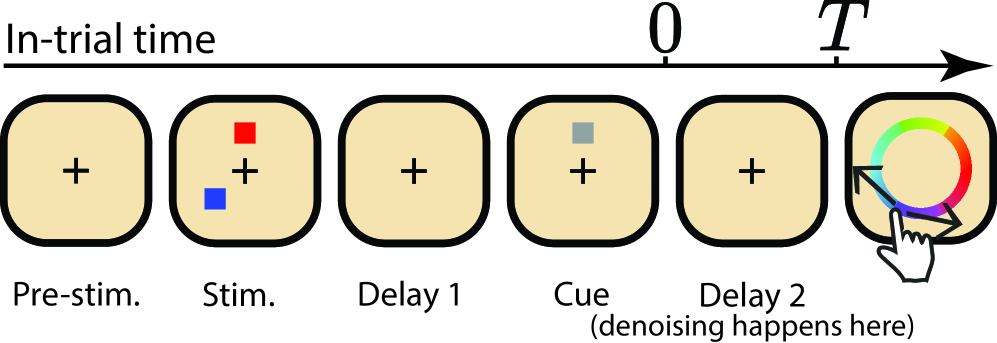

IN SHORT: This paper addresses the core challenge of automating the discovery of biologically plausible recurrent neural network (RNN) dynamics that can replicate the full richness of human and animal behavioral data, including characteristic errors and suboptimalities, rather than just optimal task performance.

核心创新

- Methodology Introduces a novel diffusion model-based training objective for RNNs to capture complex, multimodal behavioral response distributions (e.g., from swap errors), moving beyond traditional moment-matching or simple loss functions like MSE.

- Methodology Proposes using a non-parametric generative model (Bayesian Non-parametric model of Swap errors, BNS) to create surrogate behavioral data for training, overcoming the data scarcity problem inherent in experimental neuroscience.

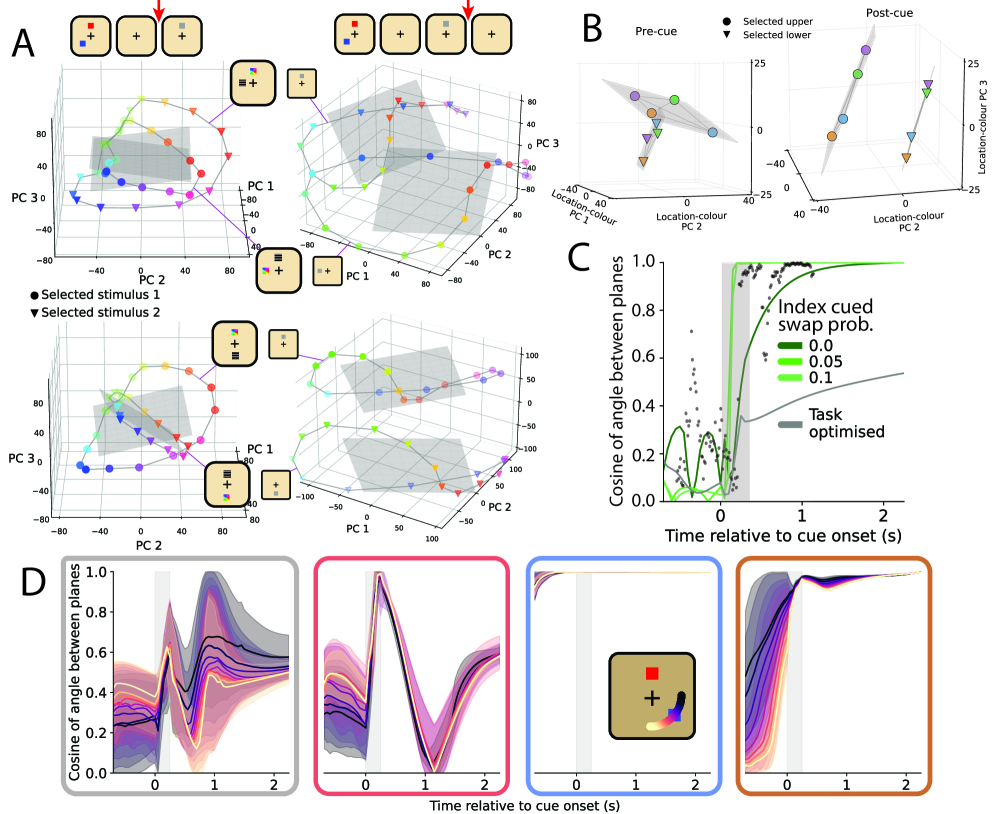

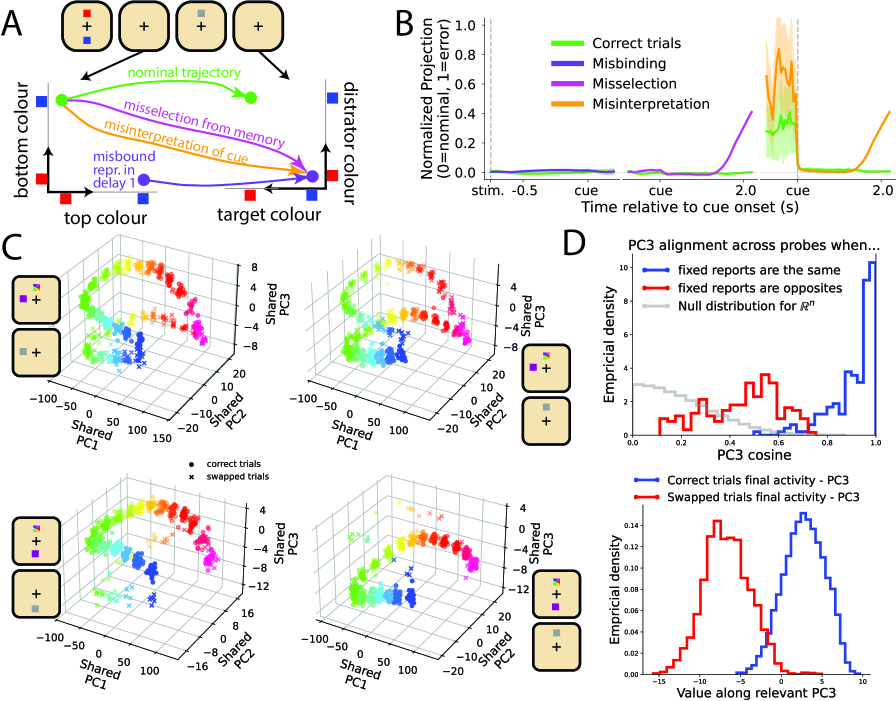

- Biology Demonstrates that RNNs trained to reproduce suboptimal behavior (swap errors) successfully recapitulate qualitative neural signatures (e.g., planar alignment of population activity) observed in macaque prefrontal cortex during visual working memory tasks, which task-optimal networks fail to capture.

主要结论

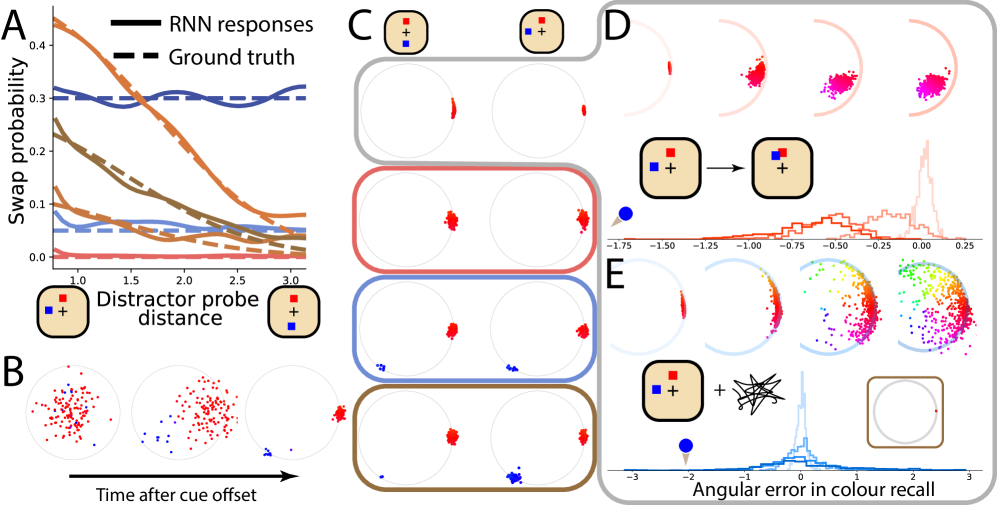

- RNNs trained with the novel diffusion-based method to reproduce probe-distance-dependent swap errors successfully matched the planar alignment geometry of neural population activity observed in macaque PFC (cosine similarity increase during cue period, as in Panichello et al., 2021), a signature not captured by task-optimal or no-swap-error models.

- The method accurately replicated target swap error rates as a function of distractor proximity (as defined by the generative BNS model), demonstrating quantitative fitting to complex behavioral distributions.

- The approach generated novel, testable hypotheses about the neural circuit mechanisms underlying swap errors (e.g., misselection at cue time), moving beyond descriptive population coding models.

摘要: Discovering the neural mechanisms underpinning cognition is one of the grand challenges of neuroscience. However, previous approaches for building models of recurrent neural network (RNN) dynamics that explain behaviour required iterative refinement of architectures and/or optimization objectives, resulting in a piecemeal, and mostly heuristic, human-in-the-loop process. Here, we offer an alternative approach that automates the discovery of viable RNN mechanisms by explicitly training RNNs to reproduce behaviour, including the same characteristic errors and suboptimalities, that humans and animals produce in a cognitive task. Achieving this required two main innovations. First, as the amount of behavioural data that can be collected in experiments is often too limited to train RNNs, we use a non-parametric generative model of behavioural responses to produce surrogate data for training RNNs. Second, to capture all relevant statistical aspects of the data, rather than a limited number of hand-picked low-order moments as in previous moment-matching-based approaches, we developed a novel diffusion model-based approach for training RNNs. To showcase the potential of our approach, we chose a visual working memory task as our test-bed, as behaviour in this task is well known to produce response distributions that are patently multimodal (due to so-called swap errors). The resulting network dynamics correctly predicted previously reported qualitative features of neural data recorded in macaques. Importantly, these results were not possible to obtain with more traditional approaches, i.e., when only a limited set of behavioural signatures (rather than the full richness of behavioural response distributions) were fitted, or when RNNs were trained for task optimality (instead of reproducing behaviour). Our approach also yields novel predictions about the mechanism of swap errors, which can be readily tested in experiments. These results suggest that fitting RNNs to rich patterns of behaviour provides a powerful way to automatically discover the neural network dynamics supporting important cognitive functions.