Paper List

-

Developing the PsyCogMetrics™ AI Lab to Evaluate Large Language Models and Advance Cognitive Science

This paper addresses the critical gap between sophisticated LLM evaluation needs and the lack of accessible, scientifically rigorous platforms that in...

-

Equivalence of approximation by networks of single- and multi-spike neurons

This paper resolves the fundamental question of whether single-spike spiking neural networks (SNNs) are inherently less expressive than multi-spike SN...

-

The neuroscience of transformers

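Proposes a novel computational mapping between the Transformer architecture and cortical column microcircuits, bridging modern AI and neuroscience.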

-

Framing local structural identifiability and observability in terms of parameter-state symmetries

This paper addresses the core challenge of systematically determining which parameters and states in a mechanistic ODE model can be uniquely inferred ...

-

Leveraging Phytolith Research using Artificial Intelligence

This paper addresses the critical bottleneck in phytolith research by automating the labor-intensive manual microscopy process through a multimodal AI...

-

Neural network-based encoding in free-viewing fMRI with gaze-aware models

This paper addresses the core challenge of building computationally efficient and ecologically valid brain encoding models for naturalistic vision by ...

-

Scalable DNA Ternary Full Adder Enabled by a Competitive Blocking Circuit

This paper addresses the core bottleneck of carry information attenuation and limited computational scale in DNA binary adders by introducing a scalab...

-

ELISA: An Interpretable Hybrid Generative AI Agent for Expression-Grounded Discovery in Single-Cell Genomics

This paper addresses the critical bottleneck of translating high-dimensional single-cell transcriptomic data into interpretable biological hypotheses ...

An AI Implementation Science Study to Improve Trustworthy Data in a Large Healthcare System

Georgia Institute of Technology, Atlanta, GA, USA | Shriners Hospitals for Children, Tampa, FL, USA

30-Second Read

IN SHORT: This paper addresses the critical gap between theoretical AI research and real-world clinical implementation by providing a practical framework for assessing and improving healthcare data quality using trustworthy AI principles.

Key Innovations

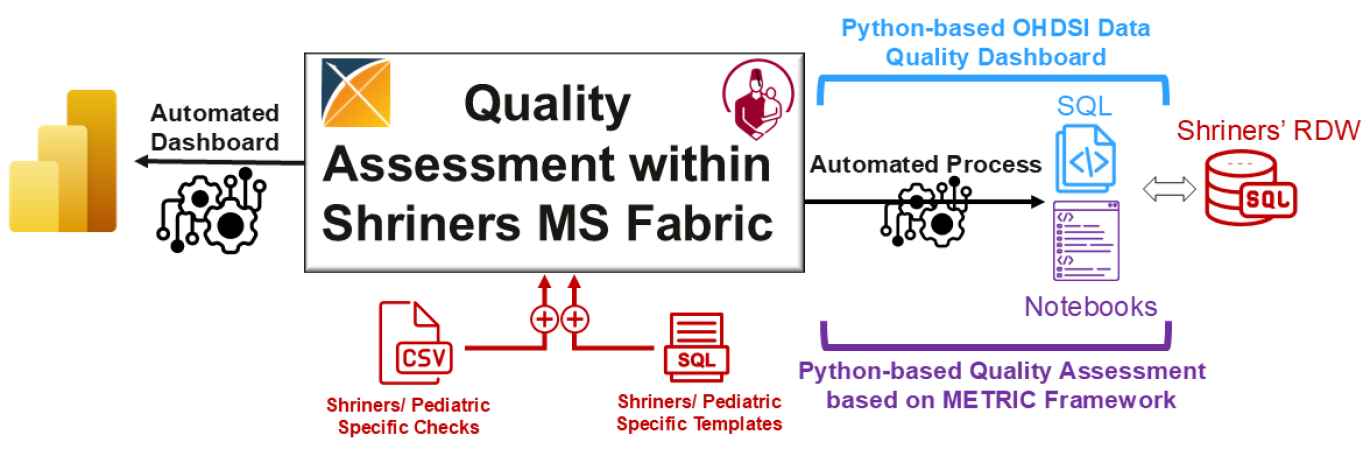

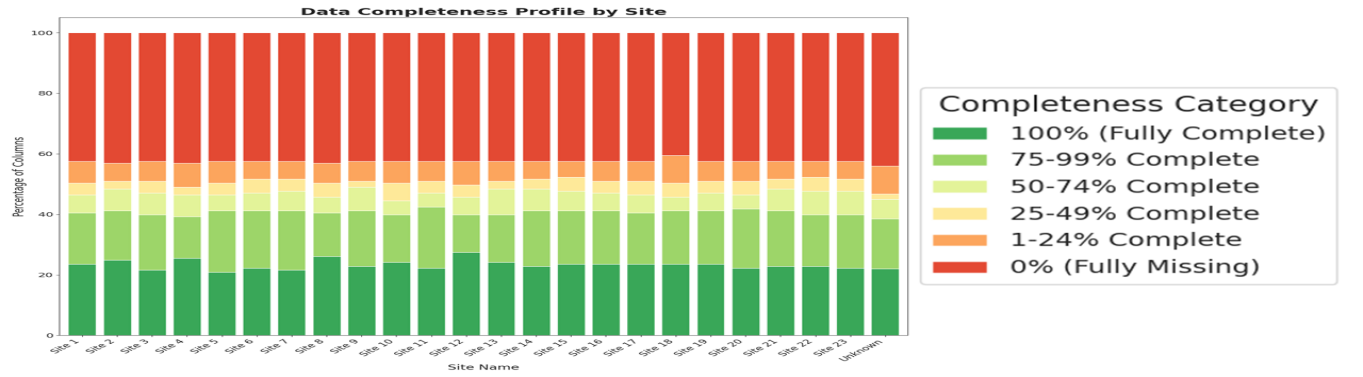

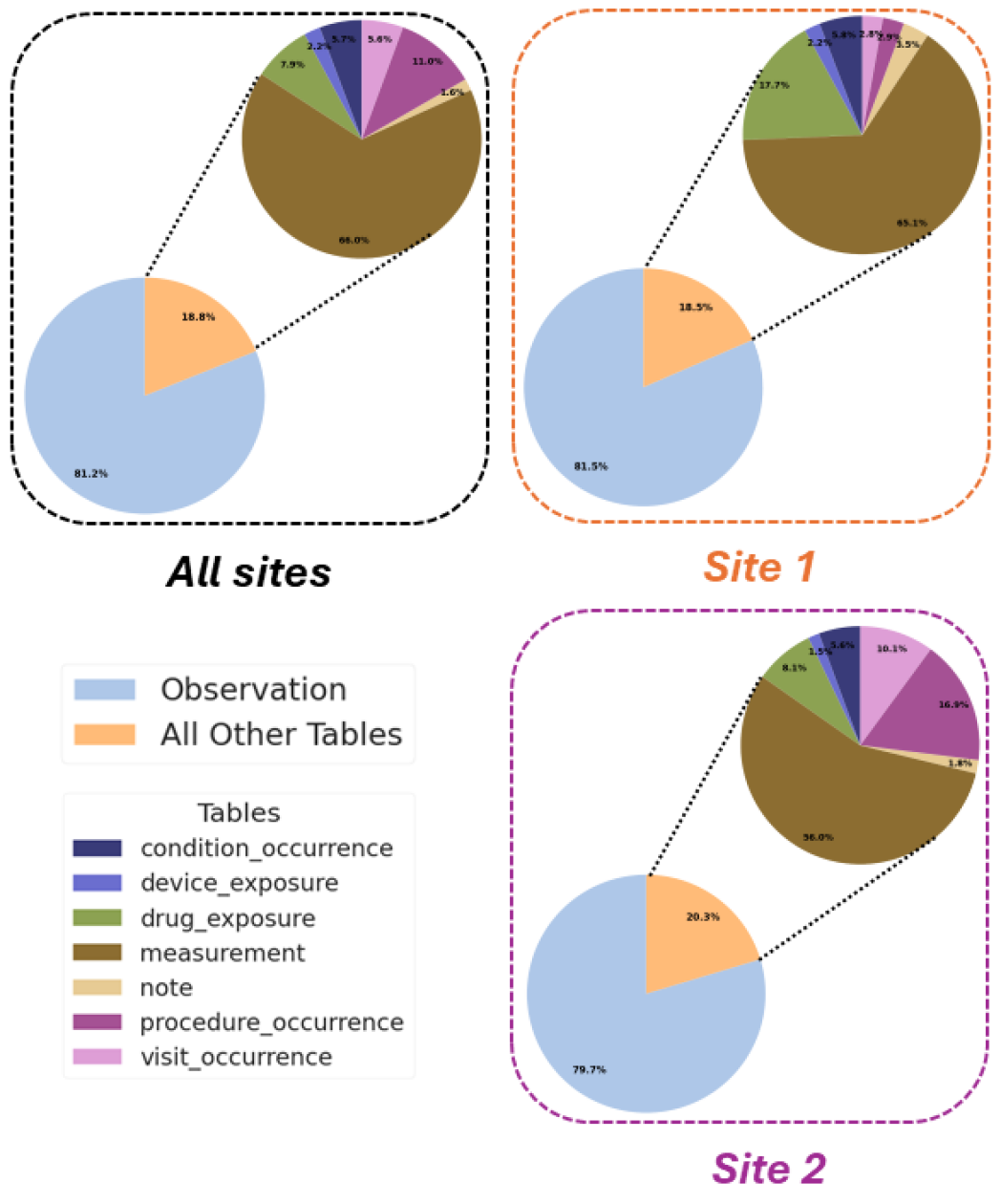

- Methodology: Developed a Python-based extension of OHDSI's Data Quality Dashboard (DQD) that integrates the METRIC framework for trustworthy AI assessment, addressing informative missingness, timeliness, and distributional consistency.

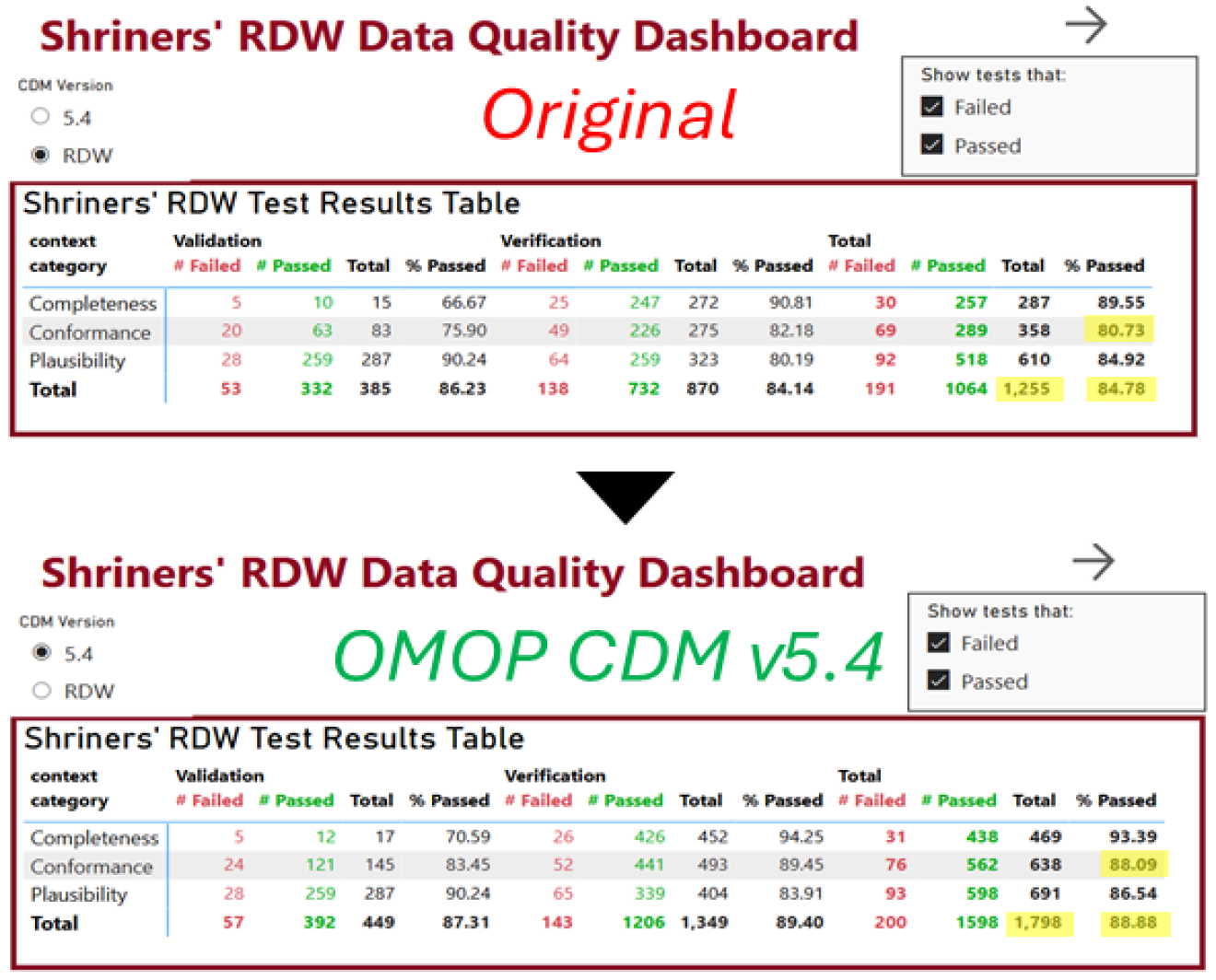

- Methodology: Implemented a real-world case study modernizing a large pediatric healthcare system's Research Data Warehouse from OMOP CDM v5.1/5.2 to v5.4 within Microsoft Fabric, raising the data quality test success rate by about 4 percentage points (84.78% to 88.88%).

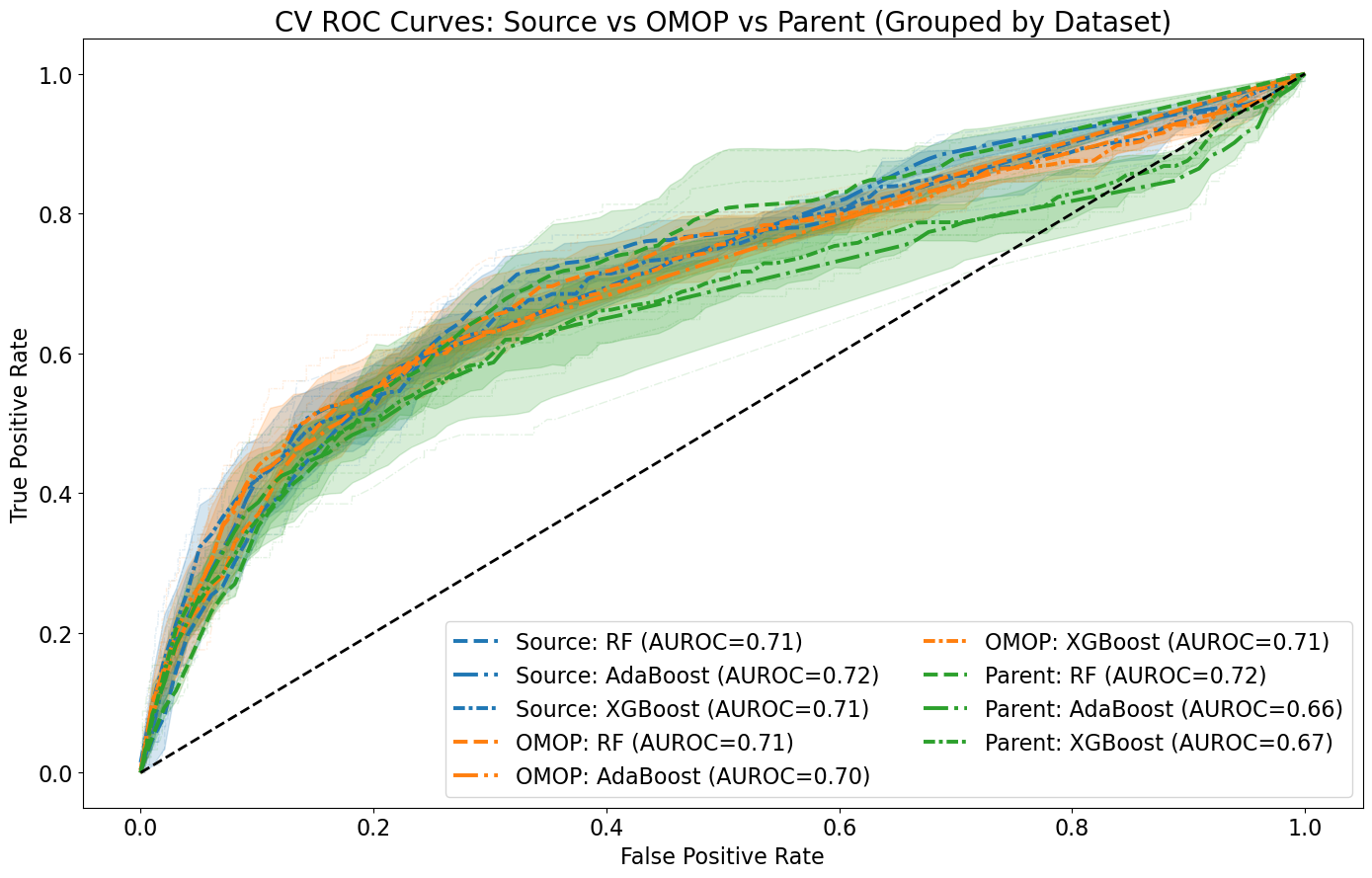

- Biology: Demonstrated, in the Craniofacial Microsomia case study, that data harmonization using OMOP CDM concept codes does not significantly impact AI model performance (mean AUROC: 71.3% with source codes vs. 70.0% with OMOP codes) while increasing interoperability.
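The AUROC comparison in the last item can be made concrete with a small sketch. It computes AUROC by pairwise comparison of positive and negative scores (the Mann-Whitney formulation) on two sets of toy scores. The labels, scores, and variable names are hypothetical illustrations, not data or code from the study.

```python
def auroc(labels, scores):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct pair (Mann-Whitney U formulation).
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: the same four patients scored by two models,
# one trained on source codes and one on harmonized concept codes.
labels = [1, 0, 1, 0]
scores_source_codes = [0.90, 0.40, 0.60, 0.20]
scores_harmonized = [0.90, 0.40, 0.35, 0.20]

auroc_source = auroc(labels, scores_source_codes)
auroc_harmonized = auroc(labels, scores_harmonized)
print(f"source codes: {auroc_source:.2f}, harmonized codes: {auroc_harmonized:.2f}")
```

In practice one would average such values over cross-validation folds or repeated runs before comparing the two encodings, which is presumably what "mean AUROC" refers to here.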

Main Conclusions

- Modernizing the Shriners Children's (SC) OMOP CDM database from v5.1/5.2 to v5.4 raised the overall data quality test success rate by about 4 percentage points (84.78% to 88.88%) and the conformance success rate by about 7.4 percentage points (80.73% to 88.09%).

- Data harmonization using OMOP CDM concept codes maintained comparable AI model performance (mean AUROC difference: 1.3 percentage points) while enabling better interoperability across healthcare systems.

- Only 50% of ICD-9 codes shared common mappings with ICD-10 codes, revealing significant vocabulary transition challenges that could degrade AI model performance when encountering mixed coding systems.
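The improvements quoted above can be read either as percentage-point differences or as relative changes. The short sketch below computes both from the before/after figures in the text. The function names are illustrative choices, not part of the study's tooling.

```python
def point_change(before, after):
    """Absolute difference between two percentages, in percentage points."""
    return after - before


def relative_change(before, after):
    """Change relative to the starting value, expressed in percent."""
    return (after - before) / before * 100.0


# Before/after figures as quoted in the conclusions above.
reported = {
    "overall success rate": (84.78, 88.88),
    "conformance success rate": (80.73, 88.09),
    "mean AUROC (OMOP vs. source codes)": (70.0, 71.3),
}

for name, (before, after) in reported.items():
    print(
        f"{name}: {point_change(before, after):+.2f} points, "
        f"{relative_change(before, after):+.2f}% relative"
    )
```

Keeping the two readings separate avoids ambiguity when a headline figure is rounded to a whole percent.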

Abstract: The rapid growth of Artificial Intelligence (AI) in healthcare has sparked interest in Trustworthy AI and AI Implementation Science, both of which are essential for accelerating clinical adoption. Yet, barriers such as strict regulations, gaps between research and clinical settings, and challenges in evaluating AI systems hinder real-world implementation. This study presents an AI implementation case study within Shriners Children’s (SC), a large multisite pediatric system, showcasing the modernization of SC’s Research Data Warehouse (RDW) to OMOP CDM v5.4 within a secure Microsoft Fabric environment. We introduce a Python-based data quality assessment tool compatible with SC’s infrastructure, an extension of OHDSI’s R/Java-based Data Quality Dashboard (DQD) that integrates Trustworthy AI principles using the METRIC framework. This extension enhances data quality evaluation by addressing informative missingness, redundancy, timeliness, and distributional consistency. We also compare systematic and case-specific AI implementation strategies for Craniofacial Microsomia (CFM) using the FHIR standard. Our contributions include a real-world evaluation of AI implementations, integration of Trustworthy AI in data quality assessment, and evidence-based insights into hybrid implementation strategies, highlighting the need to blend systematic infrastructure with use-case-driven approaches to advance AI in healthcare.
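To make the data quality dimensions named in the abstract more tangible, here is a minimal sketch of how checks for missingness, informative missingness, redundancy, and timeliness might be expressed and rolled up into a success rate. It is not the authors' tool and does not use the DQD or METRIC APIs. The records, field names, and thresholds are all hypothetical, and distributional consistency is left out for brevity.

```python
from datetime import date

# Hypothetical toy records; field names are illustrative, not OMOP CDM columns.
records = [
    {"person_id": 1, "event_date": date(2024, 1, 5), "loaded_date": date(2024, 1, 6), "lab_value": 4.2, "outcome": 1},
    {"person_id": 2, "event_date": date(2024, 1, 7), "loaded_date": date(2024, 1, 20), "lab_value": None, "outcome": 1},
    {"person_id": 3, "event_date": date(2024, 1, 9), "loaded_date": date(2024, 1, 10), "lab_value": 3.9, "outcome": 0},
    {"person_id": 3, "event_date": date(2024, 1, 9), "loaded_date": date(2024, 1, 10), "lab_value": 3.9, "outcome": 0},
]


def missing_rate(rows, field):
    """Fraction of rows where the field is None."""
    return sum(r[field] is None for r in rows) / len(rows)


def missingness_gap(rows, field, group_field):
    """Absolute difference in missing rate between the two outcome groups.

    A large gap hints that missingness itself carries information.
    """
    group1 = [r for r in rows if r[group_field] == 1]
    group0 = [r for r in rows if r[group_field] == 0]
    return abs(missing_rate(group1, field) - missing_rate(group0, field))


def duplicate_rate(rows):
    """Fraction of rows that exactly repeat an earlier row (redundancy)."""
    seen, duplicates = set(), 0
    for r in rows:
        key = tuple(r[k] for k in sorted(r))
        if key in seen:
            duplicates += 1
        seen.add(key)
    return duplicates / len(rows)


def max_lag_days(rows):
    """Longest delay between an event and its load into the warehouse."""
    return max((r["loaded_date"] - r["event_date"]).days for r in rows)


def run_checks(rows):
    """Apply hypothetical thresholds and return per-check results and pass rate."""
    results = {
        "completeness": missing_rate(rows, "lab_value") <= 0.30,
        "informative_missingness": missingness_gap(rows, "lab_value", "outcome") <= 0.10,
        "redundancy": duplicate_rate(rows) <= 0.0,
        "timeliness": max_lag_days(rows) <= 30,
    }
    success_rate = 100.0 * sum(results.values()) / len(results)
    return results, success_rate


results, success_rate = run_checks(records)
print(results, f"success rate: {success_rate:.1f}%")
```

The success rate here is simply the share of checks that pass, which mirrors the general idea of a test success rate without reproducing how the study's dashboard computes it.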